TL;DR

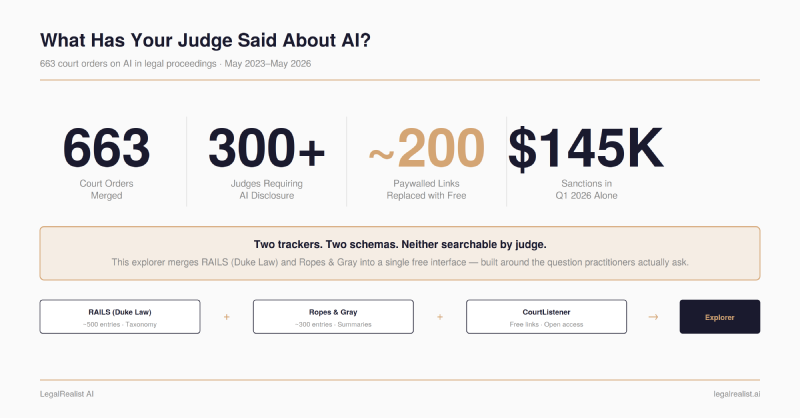

- Neither leading tracker lets you search by judge. Practitioners don’t look up AI orders by “order type” or “jurisdiction.” They need to know what their judge requires before their next filing. The existing tools weren’t built around that question.

- 663 orders show a judiciary that’s still making it up as it goes. Most orders require disclosure, usually in the form of a certification. A few ban AI outright. The only consistent pattern is inconsistency, and sanctions are escalating faster than the rules are standardizing.

- ~200 paywalled links replaced with free alternatives. Both trackers link to Westlaw and Lexis. We used CourtListener’s API to swap in free links wherever CourtListener had a copy of the order.

- The full data pipeline is open-source. Claude Haiku for classification enrichment, CourtListener’s API for link replacement, vanilla JavaScript for the interface. Pipeline and data on GitHub.

- Search for your judge before your next filing. The explorer is free at legalhack.io/data/ai-court-orders. Check standing orders, then verify directly with the court.

The Compliance Patchwork

A lawyer filing in the Southern District of New York faces different AI disclosure requirements than one filing in the Northern District of Texas. Multiply that across 663 orders issued by courts in nearly every state between May 2023 and May 2026, and you have the current landscape: a compliance patchwork with no central index and no uniform rules.

Two trackers have done the hard work of cataloguing these orders. RAILS (Responsible AI in Legal Services), a Duke Law initiative launched in March 2024, built the richest taxonomy in the space — classifying each order by type, requirements, and who it applies to, with downloadable raw data. RAILS stopped updating in May 2025 and now directs users to Ropes & Gray. The Ropes & Gray AI Court Order Tracker, launched in January 2024 and actively maintained, has grown past 550 entries with enhanced search and filtering added in May 2026. Both are valuable resources. Neither lets you search by judge, group results by judge, or click through to the actual order without a Westlaw or Lexis subscription.

We wanted to fix that. So we merged both datasets, replaced the paywalled links, and built a free interface organized around the question practitioners actually ask.

What 663 Orders Tell Us

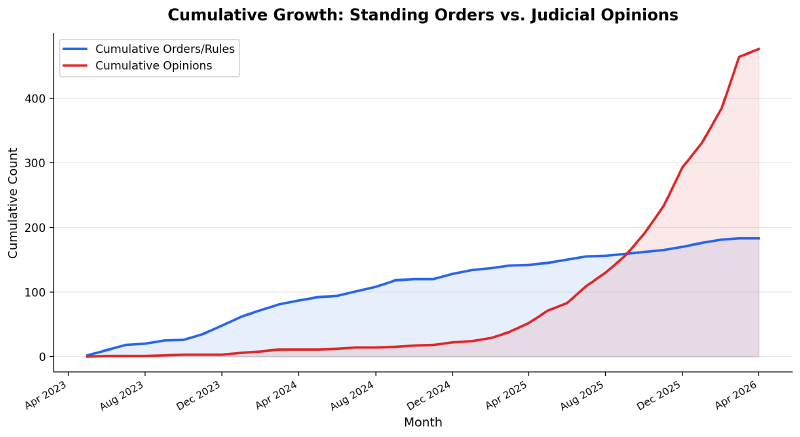

The merged dataset covers three years of judicial responses to AI in legal proceedings, from the first standing orders in May 2023 through May 2026.

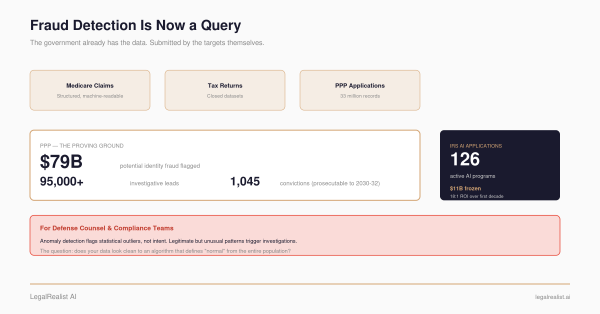

The volume is accelerating. The first AI court orders appeared in mid-2023, as Mata v. Avianca drew attention to the risk: in June 2023, two attorneys in that case were sanctioned for submitting a brief with fabricated citations generated by ChatGPT. By late 2024, the pace had picked up to a few per month. By 2026, Ropes & Gray reports 10 to 15 new decisions per week. The merged dataset includes orders from over 300 federal and state judges requiring some form of AI disclosure.

Most orders require disclosure, not prohibition. The dominant approach is a certification requirement: attorneys must state whether generative AI was used in preparing a filing and, if so, confirm that a human verified all citations and legal arguments. A smaller number of courts prohibit AI use in filings altogether, or require attorneys to certify that AI was not used. A handful ask for specifics about which tools were used and how. The Fifth Circuit declined to adopt a proposed AI rule, and at least one judge rescinded his own standing order after finding it unnecessary and slightly burdensome — evidence that the judiciary is still debating how heavy-handed to be.

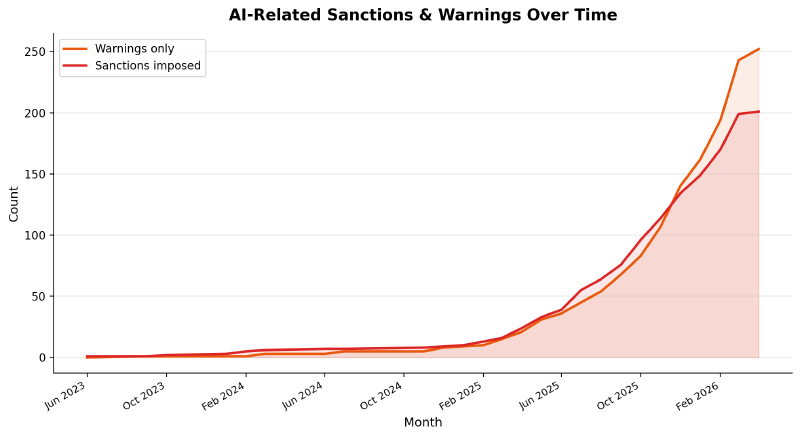

Sanctions are escalating. Courts imposed at least $145,000 in AI-related sanctions in Q1 2026 alone, including a $109,700 combined penalty in Oregon (the largest against a single attorney) and a $30,000 Sixth Circuit fine (the first substantial federal appellate sanction for AI-fabricated citations). In Nebraska, a disciplinary body recommended temporary suspension of an attorney whose Supreme Court brief contained 57 errors out of 63 citations. The sanctions aren’t for using AI. They’re for submitting unverified output: fabricated cases, invented quotations, misrepresented holdings.

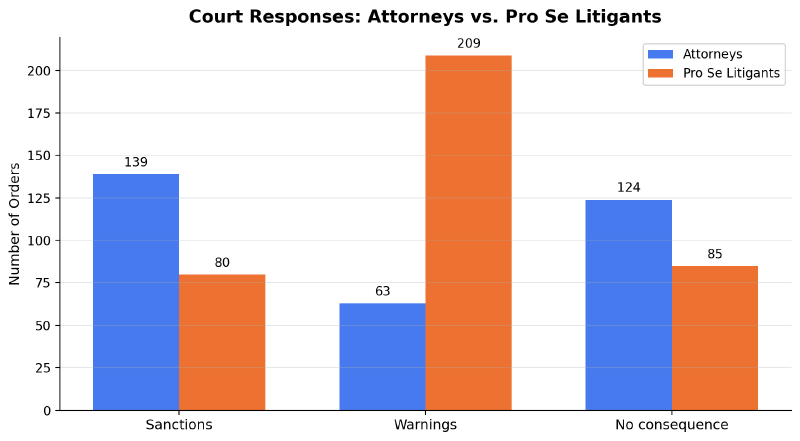

Pro se litigants get more leeway. Courts treat unrepresented parties differently than attorneys when AI-generated filings go wrong. In the dataset, attorneys drew sanctions in nearly twice as many entries as pro se litigants (139 vs. 80), while pro se litigants received warnings in more than three times as many (209 vs. 63). The pattern is consistent with how courts have historically treated pro se filings: held to a lower standard of professional responsibility, with more room for correction before punishment.
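Counts like these come from cross-tabulating two fields per entry. The sketch below is a minimal illustration, not the project's analysis code: it assumes each merged entry carries hypothetical `filer` (attorney or pro se) and `consequence` fields. These are raw counts, so turning them into true rates would need a denominator, such as the number of entries per filer type.

```python
from collections import Counter


def crosstab(entries, row_field, col_field):
    """Count (row, col) value pairs, skipping entries missing either field."""
    counts = Counter()
    for entry in entries:
        row, col = entry.get(row_field), entry.get(col_field)
        if row and col:
            counts[(row, col)] += 1
    return counts


def cell_ratio(counts, numerator, denominator):
    """Ratio between two cells of the table, e.g. attorney vs. pro se sanctions."""
    return counts[numerator] / counts[denominator]


if __name__ == "__main__":
    # Hypothetical sample entries, not the real dataset.
    sample = [
        {"filer": "attorney", "consequence": "sanction"},
        {"filer": "attorney", "consequence": "sanction"},
        {"filer": "attorney", "consequence": "warning"},
        {"filer": "pro se", "consequence": "sanction"},
        {"filer": "pro se", "consequence": "warning"},
        {"filer": "pro se"},  # no consequence recorded, so it is skipped
    ]
    table = crosstab(sample, "filer", "consequence")
    print(table[("attorney", "sanction")])  # 2
    print(cell_ratio(table, ("attorney", "sanction"), ("pro se", "sanction")))  # 2.0
```

Applied to the post's figures, the same arithmetic gives 139 / 80 ≈ 1.74 for sanctions and 209 / 63 ≈ 3.32 for warnings.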

Only 21 orders actually prohibit AI — and most are early. Of 663 orders, just 3% ban AI use outright. The majority are standing orders issued in 2023 and early 2024, when courts were still gauging the risk. Most carve out standard legal research tools like Westlaw and Lexis, targeting generative AI specifically. By mid-2025, the trend had shifted decisively toward disclosure and certification rather than blanket prohibitions. Of the more recent entries tagged as prohibitions, several are judicial opinions warning against AI use in a specific case rather than proactive bans — reactive, not structural.

The geographic distribution is uneven. Texas, New York, California, and Illinois account for a disproportionate share of orders. Some states have no AI-specific court orders at all. The choropleth map in the explorer makes this visible at a glance — click a state to filter to its orders and judges.

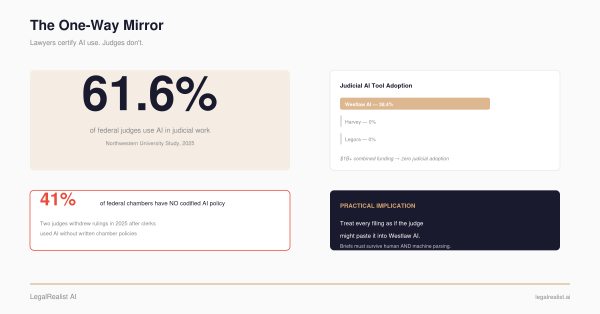

Judges are using AI too. A Northwestern University survey of 502 federal judges, published in the Sedona Conference Journal in early 2026, found that 61.6% reported using AI tools in their judicial work — primarily for legal research and document review. That’s the same category of work that gets attorneys sanctioned when the AI produces errors. The asymmetry between the verification standards courts enforce and the practices the bench has adopted is, for now, unresolved.

What We Built

The AI Court Orders Explorer merges both trackers into a single interface designed around one question: has my judge issued an AI standing order? The underlying data collection was done by RAILS and Ropes & Gray. This project adds the merge, the enrichment, and an interface organized by judge rather than by document.

The dataset combines RAILS’s ~500 entries with Ropes & Gray’s ~300, standardized into a common schema and deduplicated on case name, court, and date — 663 unique entries, more than either source alone. Where Ropes & Gray entries lacked RAILS’s richer taxonomy (order type, requirements, applicable-to categories), Claude Haiku classified them to fill the gaps.

The interface is built around judges. Search a name and see every AI-related order that judge has issued, displayed chronologically with type badges, requirement pills, consequence tags, summaries, and links to the actual order. An SVG choropleth map shows order density by state — click a state to filter. A multi-filter system narrows by type, state, outcome, sector, and boolean attributes like disclosure requirements, AI prohibitions, or sanctions.
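Organizing by judge amounts to an index from each judge's name to that judge's orders, plus fuzzy lookup and attribute filters. The explorer does this in JavaScript with MiniSearch; this Python sketch swaps in the standard library's `difflib`, which scores whole-name similarity rather than searching a term index, so treat it as an illustration of the idea rather than the explorer's behavior. The field names (`judge`, `date`, `requires_disclosure`) are hypothetical.

```python
import difflib
from collections import defaultdict


def index_by_judge(orders):
    """Group orders under each judge's name, oldest first."""
    by_judge = defaultdict(list)
    for order in orders:
        by_judge[order["judge"]].append(order)
    for judge_orders in by_judge.values():
        judge_orders.sort(key=lambda o: o["date"])  # ISO dates sort chronologically as strings
    return dict(by_judge)


def find_judges(query, by_judge, cutoff=0.6):
    """Return judge names that approximately match the query, best match first."""
    lowered = {name.lower(): name for name in by_judge}
    hits = difflib.get_close_matches(query.lower(), list(lowered), n=5, cutoff=cutoff)
    return [lowered[hit] for hit in hits]


def apply_filters(orders, **flags):
    """Keep orders whose attributes equal every flag, e.g. requires_disclosure=True."""
    return [o for o in orders if all(o.get(key) == value for key, value in flags.items())]


if __name__ == "__main__":
    orders = [  # hypothetical entries
        {"judge": "Jane Q. Example", "date": "2024-03-01", "requires_disclosure": True},
        {"judge": "Jane Q. Example", "date": "2023-06-01", "requires_disclosure": False},
        {"judge": "John Roe", "date": "2024-02-15", "requires_disclosure": True},
    ]
    index = index_by_judge(orders)
    for name in find_judges("jane exmple", index):  # note the typo in the query
        print(name, [o["date"] for o in index[name]])
    # Jane Q. Example ['2023-06-01', '2024-03-01']
```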

Twelve interactive charts visualize the full dataset: cumulative growth of orders over time, a sanctions timeline, geographic distribution, document types, requirements breakdowns, consequence severity, and enforcement patterns.
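The cumulative-growth chart reduces to a running total over per-month counts. A minimal sketch, assuming each entry's date is an ISO `YYYY-MM-DD` string; passing the resulting pairs to a Plotly line trace is left out.

```python
from collections import Counter
from itertools import accumulate


def cumulative_by_month(dates):
    """Turn ISO 'YYYY-MM-DD' dates into [(month, running_total)], oldest month first.

    Months with no orders are simply absent; a chart that needs evenly spaced
    points would have to fill them in.
    """
    per_month = Counter(date[:7] for date in dates)  # 'YYYY-MM' prefix
    months = sorted(per_month)
    return list(zip(months, accumulate(per_month[m] for m in months)))


if __name__ == "__main__":
    print(cumulative_by_month(["2023-06-02", "2023-05-30", "2023-06-20", "2024-01-10"]))
    # [('2023-05', 1), ('2023-06', 3), ('2024-01', 4)]
```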

The Paywall Problem

Both trackers link to Westlaw or Lexis for the underlying orders. For a BigLaw associate with institutional subscriptions, that’s a non-issue. For a solo practitioner, a legal aid attorney, a law student, or a pro se litigant (exactly the people most likely to need a quick compliance check before a filing), it’s a wall.

The orders themselves are public records. The trackers are public resources. The barrier is in the links.

CourtListener, operated by the nonprofit Free Law Project, hosts over 10 million legal opinions from federal and state courts, all free and searchable. Our pipeline searched CourtListener’s API using multiple strategies — docket number lookup, case name plus court matching, opinion search, and broad fallback — and replaced approximately 200 paywalled links with free alternatives. Not every order is on CourtListener, but most entries now link to something a practitioner can read without a subscription.
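The multi-strategy search is essentially an ordered fallback chain: try the most precise lookup first and fall back to broader ones only when it comes up empty. This is a minimal sketch of that control flow, not the project's pipeline. The strategies are stubs; real ones would call CourtListener's REST API (endpoints and token authentication are described in Free Law Project's API documentation), would throttle requests, and should confirm that a hit is actually the same order before swapping the link.

```python
from typing import Callable, List, Optional, Tuple

# A strategy takes one tracker entry and returns a free URL, or None if it finds nothing.
Strategy = Callable[[dict], Optional[str]]


def find_free_link(entry: dict, strategies: List[Tuple[str, Strategy]]) -> Tuple[Optional[str], Optional[str]]:
    """Try each lookup strategy in order and return (strategy_name, url) for the first hit.

    Strategies are ordered from most to least precise, so a broad fallback
    only runs when the exact lookups have failed.
    """
    for name, strategy in strategies:
        try:
            url = strategy(entry)
        except Exception:
            # A failed lookup (timeout, unexpected response) falls through to the next strategy.
            url = None
        if url:
            return name, url
    return None, None  # no free copy found: keep the original paywalled link


if __name__ == "__main__":
    # Stub strategies standing in for real CourtListener lookups (hypothetical).
    def by_docket(entry):
        return None  # pretend the docket lookup found nothing

    def by_case_and_court(entry):
        raise TimeoutError("simulated timeout")

    def broad_fallback(entry):
        return "https://example.org/placeholder"  # placeholder, not a real CourtListener URL

    chain = [("docket", by_docket), ("case_and_court", by_case_and_court), ("broad", broad_fallback)]
    print(find_free_link({"case_name": "Doe v. Roe"}, chain))
    # ('broad', 'https://example.org/placeholder')
```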

How We Built It

The data pipeline is open-source.

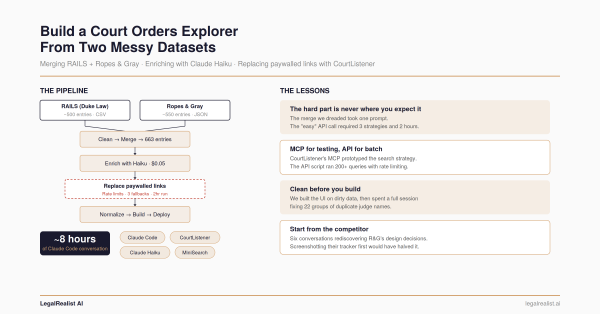

RAILS publishes its data as a CSV. Ropes & Gray’s tracker exposes JSON. Two cleaning scripts standardized both into a common schema — normalizing field names, date formats, and category labels. Deduplication merged on case name, court, and date, producing 663 unique entries from the combined set.
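Standardizing the two sources means renaming fields to one schema and coercing dates to a single format. A minimal sketch, assuming hypothetical source field names (the real RAILS CSV headers and Ropes & Gray JSON keys may differ) and a short list of date layouts; category-label mapping would follow the same dictionary pattern.

```python
import csv
import io
import json
from datetime import datetime

# Hypothetical source field names; the real RAILS CSV headers and Ropes & Gray JSON keys may differ.
FIELD_MAP = {
    "rails": {"Case Name": "case_name", "Court": "court", "Date": "date", "Judge": "judge"},
    "ropes": {"caseName": "case_name", "court": "court", "decisionDate": "date", "judge": "judge"},
}
# Date layouts to try, in order; anything else is left for manual review.
DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y", "%B %d, %Y")


def parse_date(raw):
    """Return an ISO 'YYYY-MM-DD' string, or None if no known format matches."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    return None


def standardize(record, source):
    """Rename one source record's fields to the common schema and normalize its date."""
    out = {new: str(record.get(old) or "").strip() for old, new in FIELD_MAP[source].items()}
    out["date"] = parse_date(out["date"])
    out["source"] = source
    return out


def load_rails(csv_text):
    return [standardize(row, "rails") for row in csv.DictReader(io.StringIO(csv_text))]


def load_ropes(json_text):
    return [standardize(item, "ropes") for item in json.loads(json_text)]
```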

The classification gap was the messiest problem. RAILS entries include structured fields for order type, requirements, and who the order applies to. Ropes & Gray entries have summaries but lack that taxonomy. Claude Haiku — a lightweight Anthropic model optimized for high-volume, low-cost tasks — classified the R&G-only entries into RAILS’s categories. Classic Level 3 ad hoc tooling: the classification rules are too fuzzy for a regex but constrained enough that a budget model handles them reliably.
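The useful part of model-based classification is constraining and checking the output. This sketch builds a prompt that lists the allowed labels and then discards anything the model returns outside them. The label sets are hypothetical stand-ins for RAILS's taxonomy, and `complete` is an assumed callable that sends a prompt to a model and returns its text; nothing here is the project's actual prompt.

```python
import json

# Hypothetical label sets for illustration; the real ones should come from RAILS's published taxonomy.
ORDER_TYPES = {"standing order", "local rule", "case opinion", "guidance"}
REQUIREMENTS = {"disclosure", "certification", "human verification", "prohibition"}

PROMPT_TEMPLATE = (
    "Classify this summary of a court order about AI. Reply with JSON only, with keys "
    '"order_type" (one of: {types}) and "requirements" (a list drawn from: {reqs}).\n\n'
    "Summary: {summary}"
)


def build_prompt(summary):
    return PROMPT_TEMPLATE.format(
        types=", ".join(sorted(ORDER_TYPES)),
        reqs=", ".join(sorted(REQUIREMENTS)),
        summary=summary,
    )


def parse_labels(reply):
    """Parse the model's reply, keeping only labels from the allowed sets.

    Returns None when the reply is not a JSON object, so the entry can be
    queued for manual review instead of guessed at.
    """
    try:
        data = json.loads(reply)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    order_type = data.get("order_type")
    raw_reqs = data.get("requirements")
    reqs = raw_reqs if isinstance(raw_reqs, list) else []
    return {
        "order_type": order_type if isinstance(order_type, str) and order_type in ORDER_TYPES else None,
        "requirements": sorted({r for r in reqs if isinstance(r, str) and r in REQUIREMENTS}),
    }


def classify(summary, complete):
    """`complete` is any callable that sends a prompt to a model and returns its text reply."""
    return parse_labels(complete(build_prompt(summary)))
```

In practice `complete` could wrap the Anthropic Python SDK's `client.messages.create(...)` call and return the text of the first content block; the model ID, token limit, and retry policy are left open here.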

Judge name normalization was a separate cleanup pass. The two trackers spelled the same judge’s name differently — missing middle initials, “Judge” versus “Magistrate Judge,” accent marks, typos. Twenty-two groups of duplicates were identified and merged, updating 27 entries.
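One way to catch most of those variants is to reduce every name to a comparison key and group spellings that share it, then flag near-identical keys as possible typos for a human to review. A minimal sketch; the title list is a hypothetical starting point, and dropping single-letter tokens can over-merge two judges who differ only by a first initial.

```python
import difflib
import re
import unicodedata
from collections import defaultdict

# Longer titles first, so "magistrate judge" is stripped before the bare "judge".
TITLES = ("chief magistrate judge", "magistrate judge", "chief judge", "senior judge",
          "district judge", "judge", "honorable", "hon.")


def judge_key(name):
    """Comparison key for a judge's name: no accents, titles, punctuation, or initials."""
    text = unicodedata.normalize("NFKD", name)
    text = "".join(ch for ch in text if not unicodedata.combining(ch)).lower().strip()
    stripped = True
    while stripped:  # titles can stack, e.g. "Hon. Judge ..."
        stripped = False
        for title in TITLES:
            if text.startswith(title + " "):
                text = text[len(title):].lstrip()
                stripped = True
                break
    tokens = [t for t in re.split(r"[\s.,]+", text) if len(t) > 1]  # drops initials like "q"
    return " ".join(tokens)


def group_variants(names):
    """Map each key to the spellings that share it, keeping only keys with 2+ spellings."""
    groups = defaultdict(set)
    for name in names:
        groups[judge_key(name)].add(name)
    return {key: spellings for key, spellings in groups.items() if len(spellings) > 1}


def near_duplicate_keys(keys, cutoff=0.9):
    """Pairs of distinct keys similar enough to be typos of each other; review by hand."""
    keys = sorted(set(keys))
    pairs = []
    for i, key in enumerate(keys):
        for match in difflib.get_close_matches(key, keys[i + 1:], n=3, cutoff=cutoff):
            pairs.append((key, match))
    return pairs
```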

The explorer itself is a single-file web application: ~900 lines of HTML, CSS, and JavaScript. No framework. MiniSearch handles fuzzy text matching. The SVG map uses hardcoded state paths. Plotly powers the charts.
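The explorer draws its map in JavaScript from hardcoded SVG paths. To keep every sketch here in one language, this Python version shows only the coloring logic behind a choropleth: count orders per state, place each count in one of a few equal-width bins, and emit CSS fills. The palette, the binning scheme, and the assumption that each SVG path's `id` is a two-letter state code are all hypothetical.

```python
from collections import Counter

# Hypothetical five-step palette, lightest to darkest, plus a gray for states with no orders.
PALETTE = ("#f1eef6", "#bdc9e1", "#74a9cf", "#2b8cbe", "#045a8d")
NO_DATA = "#e0e0e0"


def state_fills(order_states, all_states, palette=PALETTE):
    """Map each state code to a fill color using equal-width bins over (0, max count]."""
    counts = Counter(order_states)
    peak = max(counts.values(), default=0)
    fills = {}
    for state in all_states:
        n = counts.get(state, 0)
        if n == 0:
            fills[state] = NO_DATA
        else:
            # ceil(n * k / peak) - 1, so the busiest state always gets the darkest color.
            fills[state] = palette[(n * len(palette) - 1) // peak]
    return fills


def to_css(fills):
    """Emit one CSS rule per state, assuming each SVG <path> carries the state code as its id."""
    return "\n".join(f"#{state} {{ fill: {color}; }}" for state, color in sorted(fills.items()))
```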

The full build walkthrough, including the CourtListener rate-limit fight and the data cleanup we should have done first, is in the companion post.

What It Doesn’t Do

The explorer is a search and filtering tool, not a legal research platform. It doesn’t analyze case law, provide a citator, or offer legal advice about what any particular order requires. It’s a snapshot — new orders arrive weekly, and the dataset will fall behind unless updated.

It also doesn’t replace reading the actual order. Summaries and category labels are useful for triage. They’re not substitutes for the text of a standing order your client’s filing must comply with. Always verify current requirements directly with the court.

The explorer is free and live at legalhack.io/data/ai-court-orders. Interactive charts are at legalhack.io/data/charts. The full data pipeline is on GitHub.

Access to basic compliance information shouldn’t require a Westlaw subscription.

Further Reading

- RAILS AI Orders Resource. Duke Law’s tracker and taxonomy (last updated May 2025).

- Ropes & Gray AI Court Order Tracker. The actively maintained BigLaw tracker.

- CourtListener. Free Law Project’s open legal research platform.

- The AI Sanction Wave: $145K in Q1 Penalties. ComplexDiscovery’s analysis of Q1 2026 sanctions.

- Northwestern University Federal Judge AI Survey. The Sedona Conference Journal study of 502 federal judges’ AI use.

- ABA Formal Opinion 512. The ABA’s 2024 guidance on lawyers’ duties when using AI.

- Court AI Disclosure Requirements: A Tracker. Tracelaw’s summary of federal and state disclosure orders (updated March 2026).

- Data Pipeline on GitHub. Open-source scripts for cleaning, merging, enriching, and link replacement.

This is a standalone post on LegalRealist AI. It is intended for informational and educational purposes only and does not constitute legal advice. Court orders and local rules described here reflect publicly available information as of the publication date. New orders are issued weekly. Always verify current standing orders directly with the relevant court before filing. Laws and ethics rules governing AI use in legal practice vary by jurisdiction.