TL;DR

- Katyal’s thesis is right. His evidence undermined it. He toggled between “Harvey was our sparring partner, not a God” and “Harvey glimpsed that narrow door.” The overclaiming is where the cringe lives — not the use case.

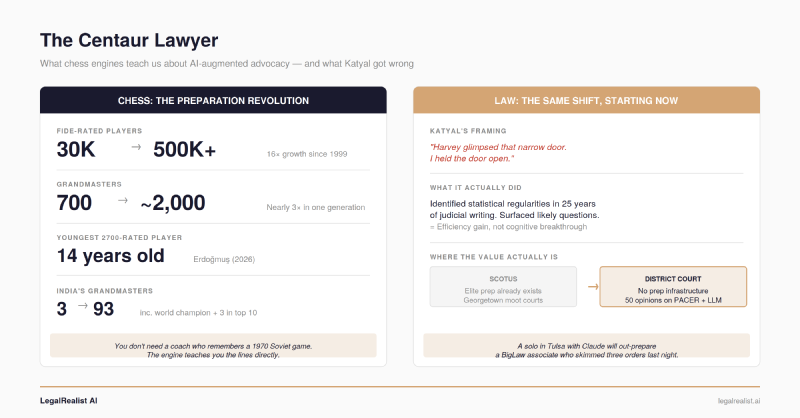

- The SCOTUS bar already does this manually. The real value is everywhere else. Elite advocates have studied individual justices obsessively for decades. The transformation happens at the district court level, where a solo practitioner can now analyze a judge’s last 50 rulings before a motion hearing.

- Chess engines didn’t replace grandmasters — they democratized preparation. When FIDE began tracking players in 1999, there were 30,000 rated players. Today there are over 500,000. The floor rose because engines eliminated the need for elite coaching institutions.

- You don’t need Harvey or a Supreme Court case to start. Any litigator can run a judge’s recent opinions through an LLM before oral argument — and should.

Recently, Neal Katyal — former acting Solicitor General, Milbank partner, and one of the most prominent Supreme Court advocates alive — released a TED Talk about winning the tariffs case. Within 48 hours, roughly 70% of David Lat’s readers rated it negatively. An anonymous member of the Supreme Court bar told Advisory Opinions that Katyal had “just announced his retirement in the form of a TED Talk.” Josh Blackman published a 4,800-word takedown. The ABA Journal, Bloomberg Law, and National Review all piled on.

Most of the backlash focused on Katyal’s self-congratulation — the personal credit-taking, the shot at a co-counsel, the TED packaging of a statutory interpretation case. That’s valid, but it’s a sideshow. The real problem with the talk is what Katyal said about AI.

In chess, a human who plays alongside an engine is called a centaur. Katyal described himself as one. He got the concept right and the credit wrong.

What Katyal Actually Did#

Katyal’s team worked with Harvey to build a custom AI instance they called Harvey Moot. It was trained on every question asked by a Supreme Court justice in oral argument over the past 25 years, plus every opinion, concurrence, and dissent those justices wrote. The goal wasn’t to generate legal arguments. It was to predict what the justices would ask — and how specific justices were likely to reason about specific issues.

That’s a reasonable use case. It’s also not the one Katyal presented on stage.

In the talk, Katyal toggled between two incompatible framings. The first was measured: Harvey was “our sparring partner, brilliant, tireless, occasionally insufferable, but not a God.” If he’d “just parroted Harvey’s output,” he said, he “would have lost the case 10-0, and there aren’t even 10 justices.” AI can analyze, AI can predict, “but the one thing AI can’t do is the thing that actually won that argument — connect.”

The second framing was not measured. TED talks reward narrative simplification, and some anthropomorphization is the genre’s convention — but Katyal is a lawyer speaking about law, and the SCOTUS bar heard his claims at face value. Harvey didn’t just surface likely topics — it “predicted the contours of the very argument I would face.” It didn’t flag that Justice Barrett might raise refund concerns — it “nailed” her worry. It didn’t identify a possible line of reasoning for Chief Justice Roberts — it “predicted a possible escape route,” and then: “Harvey glimpsed that narrow door. I held the door open. The Chief Justice walked through it.” Harvey “predicted Justice Gorsuch’s separate opinion, striking down the tariffs, almost verbatim.”

That language anthropomorphizes a pattern-matching tool into a strategic actor. Harvey didn’t “glimpse” anything. It identified statistical regularities in 25 years of judicial writing and surfaced likely question topics — some of which, as Blackman noted, any experienced moot court partner would flag in five minutes. The case was about the taxing power. Predicting that Gorsuch would ask about the taxing power is not artificial intelligence — it’s reading the cert petition. Sarah Isgur found apparent discrepancies between Katyal’s account of what Harvey predicted and what the oral argument transcript actually shows.

Experienced Supreme Court advocates have been doing this preparation manually for decades. The SCOTUS bar is a small, specialized community where practitioners study individual justices obsessively — reading every opinion in the relevant doctrinal area, tracking questioning patterns across terms, running moot courts staffed by former clerks who role-play specific justices. Georgetown’s Supreme Court Institute has run moot courts for virtually every argued case for over 20 years. The data Katyal fed into Harvey — 25 years of oral argument transcripts and written opinions — is publicly available. What Harvey Moot did was process that data faster than a human team could read it. That’s an efficiency gain on a method the SCOTUS bar already mastered.

Where the Value Actually Is#

The real value isn’t at the Supreme Court, where elite preparation infrastructure already exists. It’s at the district court level, where it doesn’t. A solo practitioner arguing a discovery motion in the Eastern District of Texas doesn’t have Georgetown’s moot court or a network of former clerks. But that judge has hundreds of opinions on PACER, years of docket entries showing how she rules on motions to compel, and questioning patterns at oral argument that nobody has ever systematically analyzed — because nobody had the tools or the budget to justify it for a single motion. An LLM changes that math. Feed a district judge’s last 50 rulings on summary judgment into Claude, and you get a preparation advantage that was previously available only to advocates arguing before nine justices with lifetime paper trails. Katyal demonstrated the technique at the top of the pyramid, where it matters least. The transformation happens at the base, where most lawyers actually practice.

Harvey itself was more careful than Katyal. In a blog post, the company described the project as “a small part of their intense preparation” and productized it as Harvey Moot for law school moot court training. Harvey confirmed Katyal holds no equity stake in the company and received no discounts — the overclaiming is about framing, not financial incentives.

The Chess Engine Precedent#

A chess engine doesn’t “glimpse” openings or “nail” an opponent’s strategy. It evaluates positions, calculates lines, and surfaces the results. The human decides what to do with them. When Magnus Carlsen prepares for a world championship match, he doesn’t say Stockfish “predicted his opponent’s plan.” He says he used the engine to study his opponent’s repertoire — and then he showed up at the board and played.

That distinction — between what the tool does and how you talk about the tool — is precisely what Katyal got wrong. What he described doing mirrors how chess engines changed competitive preparation. What he said about it on stage sounded like the engine won the case.

Today, every serious competitive player uses engines to analyze their upcoming opponent’s repertoire, stress-test opening novelties, and identify weaknesses in specific lines. Carlsen doesn’t play like Stockfish at the board. But he prepares with it, and shows up knowing his opponent’s tendencies with a precision that human study alone could never achieve. The engines didn’t replace the human skills that win chess games — creativity under time pressure, psychological reading of the opponent, navigating ambiguous positions where calculation alone doesn’t resolve the question. They compressed and supercharged the preparation that puts the player in position to deploy those skills.

The numbers tell the democratization story. When FIDE began tracking players by ID in 1999, there were roughly 30,000 rated players and about 700 grandmasters worldwide. Today there are over 500,000 rated players and nearly 2,000 grandmasters — the competitive base grew sixteenfold in a single generation. India had three grandmasters in 1999; it now has 93, including the reigning world champion, and three players in the global top ten. Recently, a 14-year-old Turkish player broke the record for youngest to reach a 2700 rating — a threshold that only 31 players in the world currently exceed. Engines didn’t just make the best players better. They eliminated the need to memorize thousands of historical games or train under a coach who remembered them — you learn the lines by playing against optimal responses, and the engine corrects you in real time. A teenager in Istanbul with Stockfish on a laptop learns better lines than a Soviet prodigy got from a state-funded academy in 1985.

Something similar is coming for law. A solo practitioner in Tulsa who feeds a judge’s last 50 opinions into an LLM will walk into oral argument better prepared than a BigLaw associate who skimmed three recent orders the night before — not because the solo is smarter, but because she used the tool and the associate didn’t. The floor rose in chess. It’s about to rise in law.

Kasparov — who lost to Deep Blue in 1997 and then pioneered “centaur chess” in 1998 — articulated the key insight: the combination of human intuition and machine calculation produced a level of play exceeding either alone. In a 2005 freestyle tournament, two amateurs with three computers beat teams of grandmasters with engines. The amateurs’ edge wasn’t chess knowledge — it was the quality of their process for integrating machine output into human decisions. (Chess is a perfect-information game; litigation, with its hidden evidence and adversarial misdirection, is closer to poker — where solvers like PioSOLVER transformed preparation on the same principle. The chess analogy is cleaner, but the poker parallel captures the incomplete-information reality of advocacy.)

Level 1 and Why That Matters#

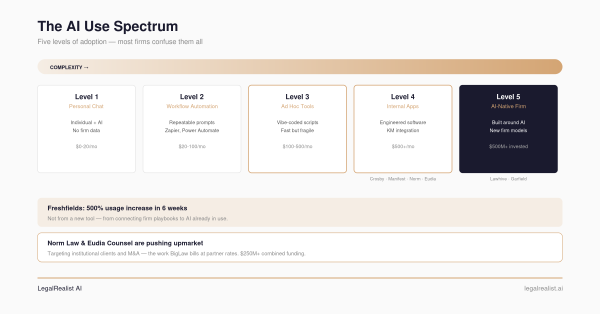

On the AI use spectrum, Katyal’s Harvey Moot is Level 1: Personal Enhancement. A senior professional used an AI tool individually, evaluated every output with expert judgment, and applied it in a context — oral argument — where only the human performs. No workflow automation. No institutional pipeline. No unsupervised AI output reaching a client or a court.

That’s not a demotion. Level 1 is where the most consequential AI use in law is happening right now — and it’s almost entirely invisible to firm management. The litigation partner prepping depositions with Claude. The transactional associate running a contract through an LLM before the partner review. The appellate lawyer asking an AI to steelman the opposing brief’s best arguments.

Katyal had a custom Harvey instance. Most lawyers won’t. But the gap between “bespoke Harvey Moot” and “paste a judge’s opinions into Claude” is narrower than the TED-talk packaging suggests — the same way the gap between Kasparov’s proprietary databases and free Stockfish closed within a decade. Harvey Moot is the expensive-hardware phase. The free-Stockfish phase is already here — it’s called a chat window.

What You Can Do Monday Morning#

You don’t need a custom Harvey instance or a Supreme Court case. The technique — modeling decision-maker behavior from documented history — works at every level of practice, and it’s most valuable where elite preparation infrastructure doesn’t already exist.

- Before oral argument: Pull a district judge’s last 20 opinions in your case type from PACER. Feed them into Claude or

GPT-4. Ask: what questions does this judge typically ask? What reasoning patterns recur? What concerns signal where this judge’s thinking is headed? - Before a deposition: Run opposing counsel’s motion practice from the current case through an LLM. Ask: what are the strongest arguments they haven’t made yet? Where are the gaps in their theory?

- Before negotiation: Upload the counterparty’s last three deals (if available from public filings or your own records). Ask: what terms did they fight hardest for? Where did they concede?

This is preparation, not practice. The AI doesn’t argue, depose, or negotiate. You do.

Katyal’s mistake wasn’t using AI to prepare for the most important argument of his career. It was describing an efficiency gain as a cognitive breakthrough, and anthropomorphizing a pattern-matching tool into a strategic partner that “glimpsed” doors and “nailed” predictions. The lawyers who get the most from AI will use it the way Carlsen uses Stockfish — as infrastructure, not as a character in the story.

Further Reading#

- Neal Katyal TED Talk: What Really Won the Trillion-Dollar Supreme Court Case. The talk itself.

- Supremely Cringe: Neal Katyal and ‘TED-Gate’. David Lat’s reporting and Katyal’s response.

- Katyal’s Boast of AI Role in Tariff Win Draws Swift Blowback. Bloomberg Law’s coverage.

- Let’s Talk About Neal Katyal’s TED Talk. Josh Blackman’s close reading on The Volokh Conspiracy.

- The Supreme Case for Harvey. Harvey AI’s own account of the Harvey Moot project.

- The TED Talk Heard ‘Round the World. Sarah Isgur and David French on Advisory Opinions.

- Chess Statistics Today. ChessBase’s 2025 analysis of GM growth and Elo trends.

- Advanced Chess (Wikipedia). History of centaur and freestyle chess.

- Modeling the Centaur: Human-Machine Synergy in Sequential Decision Making. 2024 research on human-machine chess collaboration.

- The AI Use Spectrum. Our framework for the five levels of legal AI adoption.

This post is part of the AI Adoption Strategy series on LegalRealist AI. It is intended for informational and educational purposes only and does not constitute legal advice. AI capabilities and features described here reflect publicly available information as of the publication date and are subject to rapid change. Laws and ethics rules governing AI use in legal practice vary by jurisdiction.