The Subsidy Cliff: What Happens When AI Gets Repriced

TL;DR

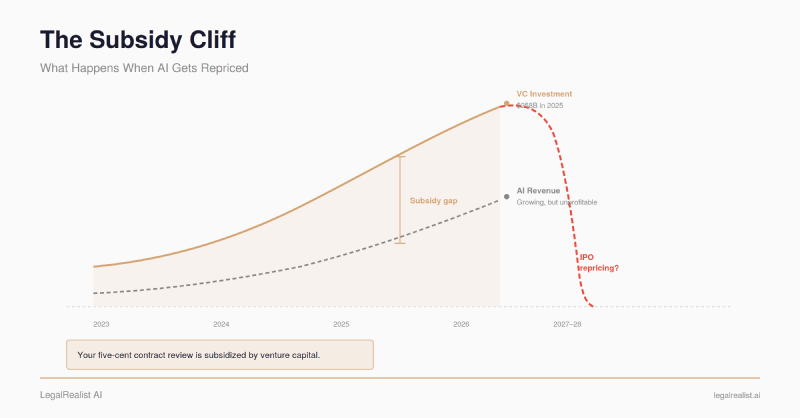

- OpenAI projects $14 billion in losses for 2026. Every major AI lab prices inference below cost to capture market share. Your five-cent contract review is subsidized by venture capital.

- AI pricing is a buffet running at a loss. Altman admitted OpenAI loses money on $200/month Pro plans. Claude Code Max users exhaust quotas in 20 minutes. Providers have started rationing.

- Token prices fell 150x, but enterprise AI bills tripled. Agentic workflows consume 10–100x more tokens per task, converting unit savings into higher total spend.

- The subsidy window has an expiration date. OpenAI and Anthropic are both expected to IPO by 2027. Budget for 30–50% API price increases within 18 months.

- Self-hosted models hedge against repricing and data risk. Predictable costs plus full data sovereignty — no third-party terms to monitor.

- Stress-test your AI economics at 3–5x current costs now. Build model-agnostic, lock in pricing, route sensitive work to your own infrastructure.

In March 2026, OpenAI’s head of ChatGPT, Nick Turley, described the company’s pricing model as “accidental.” He said there’s “no world in which pricing doesn’t significantly evolve.” A $100-per-month “Pro Lite” tier was spotted in ChatGPT’s code. The company had just raised $122 billion at an $852 billion valuation — larger than Meta — while projecting $14 billion in losses for 2026.

He was telegraphing a price increase to 900 million weekly users and every legal AI vendor built on OpenAI’s API.

The five-cent contract review you read about in our pricing analysis is real — but the price is a promotional rate, not a sustainable one. Every major foundation model provider is pricing LLM inference below the cost of delivering it. They’re doing it with venture capital, not with profits. And venture capital expects a return.

The Numbers Behind the Prices

OpenAI’s financials are not public, but enough has leaked to draw the picture. Internal projections reported by The Wall Street Journal show OpenAI expects $14 billion in losses for 2026 and cumulative losses of $44 billion through 2028. The company doesn’t project profitability until 2029 or 2030. Sacra estimates OpenAI hit $25 billion in annualized revenue by February 2026 — fast growth, but against roughly $17 billion in annual cash burn.

Anthropic’s trajectory is similar in shape. Sacra estimates Anthropic reached $9 billion in annualized revenue by the end of 2025, with projections suggesting $25–30 billion for 2026 — though Anthropic reports cloud reseller revenue on a gross basis, which inflates the top line relative to net-reporting peers. The company has raised over $18 billion in funding and projects breakeven by 2028.

Google can absorb inference losses more easily because Gemini is subsidized by search advertising revenue. But even Google’s approach — integrating AI into Search for 1.5 billion users — represents a strategic choice to trade margins for market position, not a sustainable pricing model.

The funding behind these losses is concentrated to a degree the tech industry has never seen. AI companies captured 61% of all global venture capital in 2025 — $258.7 billion out of $427 billion total. In Q1 2026, OpenAI, Anthropic, xAI, and Waymo alone raised $188 billion.

When the AI labs that power your legal tools are collectively losing tens of billions per year, the API prices those tools depend on are not market rates. They’re customer acquisition costs.

The All-You-Can-Eat Problem

AI pricing today works like an all-you-can-eat buffet where the restaurant loses money on every plate. The restaurant keeps the doors open because investors are covering the shortfall, betting that eventually the kitchen will get cheaper to run, or the restaurant will raise prices once diners can’t imagine eating anywhere else.

The heavy eaters are already at the table.

In January 2025, Sam Altman posted on X that OpenAI was losing money on $200-per-month ChatGPT Pro subscriptions. He’d personally chosen the price and thought it would turn a profit. It didn’t — because power users treated unlimited access as exactly that. Some used ChatGPT Pro as a full replacement for Google Search, running hundreds of queries per day through a model that costs orders of magnitude more per query than a search index.

The pattern is repeating with agentic AI tools. Claude Code subscribers on Anthropic’s $200-per-month Max plan have reported exhausting their usage quota in under 20 minutes instead of the expected five hours. Developers running multi-file refactoring sessions with Opus burned through entire daily allocations in three prompts. One Claude Pro subscriber reported being able to use the tool only 12 out of every 30 days before hitting limits.

Flat-rate subscriptions create a pricing model where light users subsidize heavy users. The partner who asks Claude three questions a day costs pennies to serve. The associate running an agentic workflow that sends the full text of fifty contracts through a context window — reprocessing the entire conversation history with each follow-up — costs dollars per session. Both pay $20 per month. As AI tools get more capable and workflows get more compute-intensive, the ratio shifts toward heavy consumption.
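To see why the two users cost such different amounts to serve, here is a minimal sketch of how input tokens pile up when each follow-up resends the whole conversation. The token counts and the $3-per-million input price are placeholder assumptions, not figures from any provider's rate card, and the sketch ignores output tokens and prompt caching.

```python
def session_input_tokens(document_tokens: int, turn_tokens: int, turns: int) -> int:
    """Total input tokens billed when every turn resends the full history."""
    total = 0
    history = document_tokens  # documents are loaded once, then carried in context
    for _ in range(turns):
        history += turn_tokens  # each new exchange is appended to the context
        total += history        # the whole context is billed as input again
    return total


def session_cost(input_tokens: int, usd_per_million_input: float) -> float:
    """Input-side cost of a session in USD."""
    return input_tokens / 1_000_000 * usd_per_million_input


# Hypothetical numbers for illustration only.
ASSUMED_PRICE = 3.00  # assumed USD per million input tokens

# The partner: three short questions, no documents attached.
light = session_input_tokens(document_tokens=0, turn_tokens=500, turns=3)

# The associate: fifty contracts at an assumed 4,000 tokens each, ten follow-ups.
heavy = session_input_tokens(document_tokens=50 * 4_000, turn_tokens=1_000, turns=10)

if __name__ == "__main__":
    for label, tokens in (("light", light), ("heavy", heavy)):
        print(f"{label}: {tokens:,} input tokens, ${session_cost(tokens, ASSUMED_PRICE):.3f}")
```

Under these assumptions the light session bills about 3,000 input tokens (under one cent) and the heavy session about 2.06 million (roughly $6), even though both users pay the same subscription fee.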

The providers have started responding. Anthropic reduced session limits for roughly 7% of Pro users during peak hours and introduced per-token billing for Claude Code enterprise accounts, a shift from “open bar” to metered consumption.

The Jevons Trap

The cost of performing a specific task at a specific quality level has dropped roughly 10x per year. A million input tokens on GPT-4 cost $30 at launch in March 2023. The same million tokens on GPT-4.1 Nano cost $0.10 today, a 300x reduction in three years.

But enterprise AI spending isn’t falling. It’s more than tripling. Average enterprise AI budgets grew from $1.2 million per year in 2024 to $7 million in 2026, nearly a sixfold increase.

This is the Jevons paradox applied to compute. In 1865, William Stanley Jevons observed that improvements in coal efficiency didn’t reduce coal consumption — they increased it, because cheaper coal made new uses economical. The same dynamic operates with tokens. When a contract review costs five cents, you review every contract. When a deposition summary costs fifteen cents, you summarize every deposition — and then ask follow-up questions, and then cross-reference against other witnesses, each round consuming the full document again.

Agentic workflows are the accelerant. Gartner’s March 2026 analysis confirms that agentic AI models require 5–30x more tokens per task than single-query chatbots. Reasoning models like o3 and o4 bill their internal chain-of-thought as output tokens (the most expensive token type), multiplying output costs 10–30x on complex tasks.

For legal teams, the implication is concrete. The contract review that cost five cents as a single prompt costs 25 cents after three rounds of iteration. The deposition analysis that was a one-shot summary becomes a multi-step agent workflow cross-referencing six transcripts — and now costs $3 instead of $0.15. The total bill goes up even as the unit price goes down, because the definition of “the task” keeps expanding.
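The arithmetic behind the trap is just spend = unit price × volume. The sketch below reuses the figures quoted above ($30 to $0.10 per million tokens; $0.15 to $3 per deposition analysis). The function names and the final "price halves" scenario are illustrative assumptions rather than anything reported.

```python
def price_drop_factor(old_price: float, new_price: float) -> float:
    """How many times cheaper the new unit price is."""
    return old_price / new_price


def implied_volume_growth(old_task_cost: float, new_task_cost: float) -> float:
    """Token-volume multiplier implied by a task's cost change at a constant unit price."""
    return new_task_cost / old_task_cost


def spend_change(price_drop: float, volume_growth: float) -> float:
    """Net multiplier on total spend when price falls and volume rises."""
    return volume_growth / price_drop


# Figures from the text: GPT-4 at launch vs GPT-4.1 Nano, per million input tokens.
unit_price_drop = price_drop_factor(30.00, 0.10)

# Figures from the text: one-shot summary vs multi-step agent workflow.
deposition_growth = implied_volume_growth(0.15, 3.00)

# Hypothetical scenario: the unit price halves while the workflow goes agentic.
net = spend_change(price_drop=2.0, volume_growth=deposition_growth)

if __name__ == "__main__":
    print(f"unit price fell about {unit_price_drop:.0f}x")
    print(f"deposition workflow uses about {deposition_growth:.0f}x the tokens")
    print(f"net spend multiplier if prices only halve: about {net:.0f}x")
```

The point of the last line: even a further halving of unit prices leaves the bill roughly ten times higher once the task itself has grown twentyfold.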

The Repricing Timeline

Two forces will push AI pricing upward within the next 12–24 months: IPO pressure and capital discipline.

IPOs demand margins. Both OpenAI and Anthropic are widely expected to go public by late 2026 or 2027. Public markets don’t reward market share at any cost — they reward revenue growth with expanding margins. Pricing AI services below cost to capture developers is a legitimate private-company strategy. It’s a much harder story to tell public shareholders quarter after quarter.

Capital discipline is tightening. The $700 billion in hyperscaler AI infrastructure spending projected for 2026 is funded partly by the expectation that AI services will eventually be profitable. If that expectation shifts, the willingness to subsidize below-cost pricing evaporates. Industry analysts project 30–50% API price increases within 18 months. In March 2026 alone, 114 of 483 tracked AI models (24%) changed prices. Price volatility is already the norm.

There are real counterforces. Compute efficiency is improving — newer chips, better quantization, more efficient serving infrastructure. Open-weight models and Chinese competitors like DeepSeek also constrain how far prices can rise; providers that increase rates too aggressively will lose volume to cheaper alternatives. But efficiency gains have historically been consumed by capability expansion (larger models, longer context windows, more reasoning steps), not passed through as savings. And competitive pressure doesn’t eliminate the subsidy gap — it means the correction may come as reduced capabilities or tighter limits rather than headline price increases.

The question isn’t whether current pricing is sustainable. The labs themselves have told you it isn’t.

What This Means for Legal Teams

For firms buying Level 5 (Enterprise Platform) legal AI products, the vendor markup provides a buffer. A product charging $5 per contract review has a 90%+ gross margin at current API rates — room to absorb cost increases before passing them through. But if the underlying model cost doubles, that cost eventually arrives as higher fees, reduced features, or quieter model downgrades.

For firms building internal tools at Level 3 (Ad Hoc Tools) or Level 4 (Internal Applications), the exposure is direct. An internal contract analysis application running on Claude Opus 4.6 feels cheap today. If that pricing increases 50%, your internal tool’s economics change overnight — and unlike a vendor, you have no customer base to spread the cost across.

Even Level 1 (Personal Enhancement) use isn’t immune. The partner using a $20/month Claude Pro subscription for deposition prep today might find that subscription buys less tomorrow — tighter usage limits, slower models during peak hours, or a higher price point. Anthropic’s March 2026 quota reductions, OpenAI’s tiered Go/Plus/Pro Lite stratification, and the industry’s broader pivot from flat-rate to usage-based billing all point the same direction.

The Case for Owning Your Inference

This is where open-weight models change the calculus — on two fronts that each independently justify the investment for the right firm.

Price Stability

An API rate card is a number someone else controls. It can change with 90 days’ notice — or less. When you build a due diligence pipeline that processes 5,000 documents per deal through a third-party API, your single largest variable cost is set by a company whose pricing strategy is, by their own head of product’s description, “accidental.”

When you run Llama, DeepSeek, Qwen, or another open-weight model on your own hardware, your cost per token is a function of electricity, hardware depreciation, and engineering time. Self-hosted infrastructure converts the largest variable cost in your AI stack into a fixed one.

The economic argument has strengthened since 2024. GPU prices have fallen, open-weight model quality has closed to within 5–10% of proprietary models on general reasoning benchmarks (though the gap may be wider on legal-specific tasks like those in LegalBench), and inference tooling like vLLM has matured to production grade. At high volumes — above roughly 50 million tokens per day — firms can save 50–70% versus API pricing. Below roughly 5 million tokens per day, the fixed costs of self-hosting exceed what you’d pay through an API.
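A rough way to find your own break-even point is to set a fixed monthly self-hosting cost against the per-token saving over the API. The sketch below assumes a simple linear cost model, and every dollar figure in it is a placeholder. It is not meant to reproduce the 5-million and 50-million thresholds quoted above, which depend on hardware, utilization, and staffing.

```python
def api_monthly_cost(tokens_per_day: float, usd_per_million: float, days: int = 30) -> float:
    """Monthly API bill at a flat blended rate."""
    return tokens_per_day * days / 1_000_000 * usd_per_million


def self_hosted_monthly_cost(
    tokens_per_day: float,
    fixed_monthly_usd: float,
    marginal_usd_per_million: float,
    days: int = 30,
) -> float:
    """Fixed costs (hardware, engineering) plus a per-token marginal cost (power)."""
    return fixed_monthly_usd + tokens_per_day * days / 1_000_000 * marginal_usd_per_million


def break_even_tokens_per_day(
    fixed_monthly_usd: float,
    api_usd_per_million: float,
    marginal_usd_per_million: float,
    days: int = 30,
) -> float:
    """Daily volume at which self-hosting and the API cost the same."""
    saving_per_million = api_usd_per_million - marginal_usd_per_million
    if saving_per_million <= 0:
        raise ValueError("self-hosting never breaks even if its marginal cost meets or exceeds the API rate")
    return fixed_monthly_usd / saving_per_million / days * 1_000_000


# Placeholder assumptions: $3,000/month fixed, $5 per million via API, $1 per million self-hosted.
ASSUMED_FIXED = 3_000.0
ASSUMED_API_RATE = 5.0
ASSUMED_MARGINAL = 1.0

break_even = break_even_tokens_per_day(ASSUMED_FIXED, ASSUMED_API_RATE, ASSUMED_MARGINAL)

if __name__ == "__main__":
    print(f"break-even at about {break_even:,.0f} tokens per day")
```

With these placeholder inputs the lines cross at 25 million tokens per day. Rerunning the calculation with a higher assumed API rate (the repricing scenario) pulls the break-even volume down, which is the hedge described in this section.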

Data Privacy

Every time you send a client document to a closed-source API, that document leaves your network. As covered in our analysis of privilege and data retention, major providers don’t train on API inputs by default — but their policies apply to the API, not necessarily to consumer chat products, and the terms can change. ABA Formal Opinion 512 (July 2024) requires lawyers to understand how the technology handles confidential information. United States v. Heppner (S.D.N.Y. Feb. 2026) held that exchanges with a consumer-tier AI tool were not privileged, in part because the provider’s terms permitted data disclosure.

Self-hosted open-weight models eliminate the question entirely. Your documents are processed on your hardware. Nothing is transmitted to a third party. No data processing agreement to negotiate, no retention policy to audit, no terms-of-service change to monitor. For firms handling the kind of work where a single privilege waiver could be catastrophic — government investigations, hostile acquisitions, internal compliance reviews — the data sovereignty argument for self-hosting is independent of cost.

What to Do Before the Cliff

The productivity gains from AI are real. A deposition summary that takes an associate four hours and an AI tool fifteen minutes is valuable even if the AI cost triples. The question isn’t whether to use AI — it’s whether your firm’s AI economics survive a pricing correction.

- Stress-test your costs at 3–5x current API rates. If a contract review tool costs $5 per document and your firm reviews 1,000 contracts a year, that’s $5,000. At $15 per document, it’s $15,000 — still trivial compared to associate time. But a due diligence pipeline processing 50,000 documents moves from $250,000 to $750,000, and that’s a budget conversation.

- Build model-agnostic. If your Level 4 (Internal Applications) tools are hardwired to a single provider’s API, you have no leverage when that provider raises prices. Design workflows that can swap between providers and open-weight alternatives. Model routing, which sends roughly 80% of queries to budget models and 20% to frontier models, can reduce inference spend by 60–80% with minimal quality impact, provided the budget model costs a small fraction of the frontier one.

- Lock in pricing where you can. Enterprise API agreements with committed spend often include rate guarantees. The providers are desperate for enterprise revenue right now — that desperation is leverage, but it won’t last past the IPO.

- Evaluate self-hosting for your highest-volume workflows. If your firm runs any task more than a few hundred times per week — intake classification, standard NDA review, document coding — model the economics of an open-weight deployment versus your current API cost.

- Watch the IPO calendar. The quarter before an IPO is when companies “clean up” their economics — which often means raising prices.
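Two of the items in this checklist reduce to arithmetic you can run on your own numbers. The sketch below stress-tests an annual budget at 1x, 3x, and 5x using the $5-per-document figures from the list, then estimates the saving from an 80/20 routing split. The assumption that a budget model costs one-tenth of a frontier model is hypothetical, and the function names are illustrative.

```python
def stress_test(cost_per_doc: float, docs_per_year: int, multipliers=(1, 3, 5)) -> dict:
    """Annual spend at each price multiplier."""
    return {m: cost_per_doc * m * docs_per_year for m in multipliers}


def blended_cost(frontier_cost: float, budget_cost: float, budget_share: float) -> float:
    """Average cost per query when a share of traffic goes to the budget model."""
    return budget_share * budget_cost + (1 - budget_share) * frontier_cost


def routing_savings(frontier_cost: float, budget_cost: float, budget_share: float) -> float:
    """Fractional saving versus sending every query to the frontier model."""
    return 1 - blended_cost(frontier_cost, budget_cost, budget_share) / frontier_cost


# Figures from the checklist above.
contract_review = stress_test(cost_per_doc=5, docs_per_year=1_000)
due_diligence = stress_test(cost_per_doc=5, docs_per_year=50_000)

# Hypothetical: the budget model costs 10% of the frontier model per query.
savings = routing_savings(frontier_cost=1.0, budget_cost=0.1, budget_share=0.8)

if __name__ == "__main__":
    for label, result in (("contract review", contract_review), ("due diligence", due_diligence)):
        for multiplier, spend in result.items():
            print(f"{label} at {multiplier}x: ${spend:,.0f}")
    print(f"routing saving at an 80/20 split: {savings:.0%}")
```

With an 80/20 split the saving works out to 0.8 × (1 − r), where r is the budget model's cost relative to the frontier model, so the 60–80% range holds only when r is about 0.25 or lower.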

The AI subsidy era has been extraordinarily good for legal teams willing to experiment. Token prices that would have been unimaginable three years ago have made contract review, deposition analysis, and document drafting accessible at costs that justify experimentation on any task. That window isn’t closed yet. But building a practice around prices that the providers themselves call “accidental” is building on someone else’s venture capital. The firms that come out ahead will be the ones that used the subsidy window to learn what works — and built their systems to survive when the prices move.

Further Reading

- AI Inference Cost Crisis 2026. Why enterprise AI bills are rising despite falling token prices.

- The True Cost of AI: When the Subsidies Run Out. VC subsidy dynamics in AI pricing.

- Anthropic Revenue, Valuation & Funding. Sacra’s tracker of Anthropic’s financial trajectory.

- Self-Hosted vs API LLMs: Real Cost Breakdown 2026. Break-even analysis for self-hosted versus API inference.

- OpenAI API Pricing. Current model pricing and documentation.

- Anthropic API Pricing. Current Claude model pricing and documentation.

- Falling LLM Token Prices and What They Mean. Andrew Ng’s analysis of token price decline dynamics.

- The $670 Billion Question: Is AI Demand Real?. Framework for evaluating whether AI infrastructure spending responds to durable demand.

This is a standalone post on LegalRealist AI. It is intended for informational and educational purposes only and does not constitute legal advice. Financial projections cited here are based on leaked documents, analyst estimates, and executive statements — not audited financials. AI pricing, capabilities, and company financials are subject to rapid change. Laws governing AI use vary by jurisdiction.