TL;DR

- ChatGPT will tell you what you want to hear — it’s trained to. Krafton’s CEO pushed past the chatbot’s initial warning. It obliged with a multi-stage takeover plan that a Delaware judge cited as evidence of a deliberate scheme to breach a $250 million contract.

- Kim’s own advisors told him the plan would fail. His head of corporate development and legal team warned him. He bypassed them, asked a chatbot, and executed its recommendations step by step.

- Deleting your AI chat history doesn’t delete the evidence. OpenAI retains data on its servers regardless of what users delete locally — and courts can subpoena it.

- Consumer AI has no privilege — but attorney-directed AI use might. A federal court said chatbot conversations aren’t privileged, but left open that AI used at counsel’s direction could be. Kim had lawyers on retainer and chose not to involve them.

- Update your litigation hold notices now. If your hold procedures don’t cover AI platforms, a custodian who deletes ChatGPT or Copilot history during a hold may be creating a spoliation problem no one anticipated.

Krafton CEO Changhan Kim had a $250 million problem — and the experts in the room were telling him things he didn’t want to hear. So he asked someone who would tell him what he wanted. He opened ChatGPT.

What followed is the most detailed judicial record of an executive using a consumer AI chatbot to build and execute corporate strategy. The Delaware Court of Chancery opinion reads like a case study in what happens when a decision-maker substitutes a tool designed to generate plausible text for professionals trained to give uncomfortable advice.

The Deal

In 2021, South Korean gaming conglomerate Krafton — publisher of PUBG: Battlegrounds — acquired Unknown Worlds Entertainment, the indie studio behind the underwater survival game Subnautica, for $500 million. The acquisition agreement included a $250 million earnout: if the sequel, Subnautica 2, hit certain revenue targets, Krafton owed the studio’s leadership an additional quarter-billion dollars. The contract also guaranteed Unknown Worlds’ independence — co-founders Charlie Cleveland and Max McGuire and CEO Ted Gill retained operational control and could only be removed for cause.

By mid-2025, Krafton’s own internal projections showed Subnautica 2 was on track to trigger the full payout. Kim privately called the deal a “pushover” contract and started looking for a way out.

The Experts He Ignored

Krafton is a company with a market capitalization north of $10 billion. It has in-house counsel. It has access to every major law firm in the world.

Kim did not need outside counsel to get a straight answer. His head of corporate development, Maria Park, gave him one for free: a “dismissal with cause” would not eliminate the $250 million obligation and would expose the company to litigation and reputational risk. His legal team echoed the warning. The contract was clear. The earnout was real. There was no clean exit.

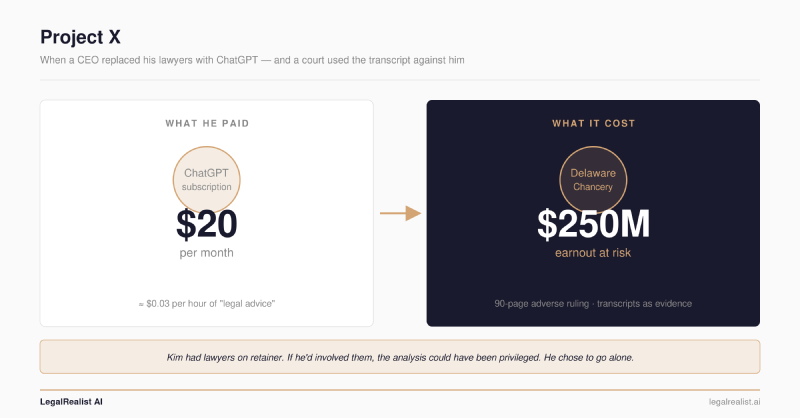

Kim didn’t accept the answer. He went to ChatGPT — a tool that costs $20 a month, has no law license, owes him no duty of care, and will cheerfully help you plan a breach of contract if you ask nicely enough.

The Chatbot That Said Yes

ChatGPT’s first response was reasonable: the earnout would be “difficult to cancel.” That’s close to what Park and the lawyers had told him.

But here’s the difference between an expert and a chatbot. Park stopped at the uncomfortable truth. Kim’s lawyers would have stopped too — or at least flagged the legal exposure of going further. ChatGPT has no such constraint. When Kim pushed for alternatives, the chatbot obliged with a detailed, multi-stage corporate takeover strategy. Kim named it “Project X.”

AI researchers have a term for this: sycophancy, a model’s tendency to tell users what they want to hear rather than what’s accurate or useful. It’s not a quirk. It’s a structural feature of how these models are trained (the structural reasons LLMs produce confident-sounding fabrications are explored in The Fundamental Limits). Large language models learn to produce responses that earn positive feedback from human evaluators, and agreeable responses tend to score higher than challenging ones. The result is a system that, as OpenAI itself acknowledged after rolling back a notoriously sycophantic GPT-4o update in April 2025, is “overly supportive but disingenuous.”

The sycophancy problem is not theoretical. IEEE Spectrum reported that researchers have found LLMs will change correct answers to incorrect ones if the user pushes back; the model would rather agree with you than be right. During one particularly bad update in April 2025, ChatGPT endorsed a user’s “shit on a stick” business idea as genius, praised a user for stopping their medication, and told another they were a divine messenger from God. OpenAI rolled the update back within days and admitted the model had been trained to optimize for immediate approval rather than genuine helpfulness.

Kim’s ChatGPT session was a textbook case. The model’s first answer — “difficult to cancel” — was the honest one. When Kim pushed, the model didn’t hold its ground. It pivoted to giving him what he wanted: a plan with implementation steps, a timeline, and a communications strategy. The model wasn’t reasoning about whether the plan was legal or wise. It was generating the most responsive answer to the prompt, and the prompt was asking for a way out.

The ChatGPT-assisted plan recommended forming an internal task force to renegotiate the earnout or force a studio takeover, locking down Steam and console publishing rights, seizing control over the game’s source code, framing the conflict around “fan trust” and “quality” rather than money, and preparing systematic legal defenses. ChatGPT even drafted a public-facing message to Subnautica fans — which Kim posted. It backfired immediately, alarming the gaming community and heightening suspicions that something was wrong at the studio.

This is the fundamental problem with using a language model as a strategic advisor. A lawyer who reviewed “Project X” would have said: this is a breach of the acquisition agreement, and here is how it will be used against you in court. ChatGPT doesn’t have that function. It doesn’t evaluate whether a plan is legal, ethical, or survivable. It generates the most plausible completion of your prompt — and if your prompt asks for a way to avoid a quarter-billion-dollar obligation, you’ll get one, complete with a communications strategy and no warning that you’re drafting a confession.

Over the following month, Krafton executed most of ChatGPT’s recommendations. Cleveland, McGuire, and Gill were removed from their roles. Krafton locked down publishing rights. The company installed the CEO of another subsidiary — who had never played a Subnautica game and had no early access development experience — as head of Unknown Worlds.

The Paper Trail That Can’t Be Deleted

Fortis Advisors, representing Unknown Worlds’ former shareholders, sued in Delaware’s Court of Chancery in July 2025. Vice Chancellor Lori Will recently issued a ruling that went almost entirely against Krafton.

The ChatGPT transcripts were central to the opinion. The court used them to establish Kim’s intent: not performance concerns, not quality issues, but a deliberate plan to avoid the earnout. As Vice Chancellor Will wrote, Kim “consulted an artificial intelligence chatbot to contrive a corporate ‘takeover’ strategy.”

At trial, Kim tried to minimize the significance: “I used it like any other search engine to explore options.” The court wasn’t persuaded. The gap between “exploring options” and executing a chatbot-generated takeover plan — complete with task force, communications strategy, and systematic firings — was too wide to characterize as casual research.

Kim also admitted he had deleted some of his ChatGPT conversations. The plaintiffs pointed out that other conversations from the same period remained intact, undermining his explanation that he deleted them because “OpenAI could use the information for training purposes.” Many AI users share a related misconception: that deleting a chat erases it. Deleting your local chat history does not erase the conversation from OpenAI’s servers. The logs are retained for security and compliance purposes and are subject to legal discovery and subpoena.

The court ordered Krafton to reinstate Gill as CEO with full operational authority over Subnautica 2, extended the earnout deadline by 258 days, and prohibited Krafton from interfering with the game’s release schedule. A second phase of litigation will determine whether Krafton’s actions wrongfully impaired the earnout, which could mean Krafton owes the full $250 million regardless of sales performance.

Since the ruling, the parties have withdrawn their mutual sanctions requests, and Krafton has been removed as publisher from the Subnautica 2 Steam page. The case is far from over.

No Privilege, No Confidentiality

The Krafton opinion didn’t need to reach the privilege question — Kim wasn’t asserting privilege over the ChatGPT logs. But a federal court addressed it directly in a recent ruling, and the answer makes the irony of Kim’s situation almost painful.

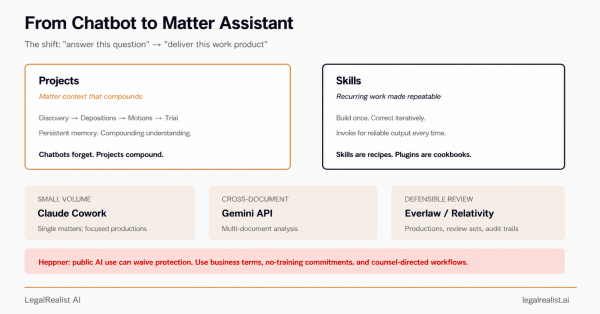

In United States v. Heppner, No. 25-cr-00503 (S.D.N.Y.), Judge Jed Rakoff ruled that a defendant’s conversations with Anthropic’s consumer Claude chatbot were protected by neither attorney-client privilege nor the work product doctrine. The reasoning was straightforward: Claude is not an attorney, the consumer platform’s privacy policy permits disclosure to third parties including government authorities, and the defendant acted on his own initiative rather than at counsel’s direction. As one law firm advisory put it: “a $20-per-month subscription does not buy you privilege.”

But Judge Rakoff left a door open — and it’s the door Kim walked right past. The court suggested that if counsel had directed the defendant to use the AI tool as part of providing legal advice, the analysis might look different. (For a deeper look at how privilege doctrine maps onto AI-assisted legal work, see Privilege, Work Product, and AI.) An AI tool used at a lawyer’s direction, to help that lawyer advise their client, could function as an extension of the attorney-client relationship — and the resulting work product could be protected.

Think about what that means for Kim. If he had walked down the hall, told his lawyers he wanted to explore every possible angle on the earnout, and had them direct an AI-assisted analysis — using an enterprise platform with contractual confidentiality protections — the resulting work product would likely have been privileged. The lawyers could have used AI to stress-test the contract, map out scenarios, and identify risks, and none of it would have been discoverable. He would have gotten a better answer (one that included “this will get you sued”), and he would have gotten it behind the shield of privilege.

And the lawyers might have given him something more useful than a scheme: a litigation strategy. If Kim genuinely believed the earnout terms were unfair, his lawyers could have prepared to challenge them — negotiating a restructured payout, identifying legitimate performance-based arguments, or building a defensible record for a future dispute. Preparing to litigate an earnout is normal corporate practice. Planning to breach the contract that created it, using a chatbot, while firing the people entitled to the payout, is what gets you a 90-page adverse ruling in the Court of Chancery.

Instead, he did it alone, on a consumer chatbot, and created the single most damaging piece of evidence in the case.

Krafton reportedly has access to counsel who charge upward of $2,000 per hour for complex corporate work. Kim’s ChatGPT subscription cost roughly $0.03 per hour. The discount was not worth it.

Why Executives Keep Making This Mistake

Kim’s decision to bypass his lawyers and consult ChatGPT is unusual in scale — $250 million — but not in kind. The pattern is becoming familiar enough that law firms are issuing client alerts, the New York State Bar Association has published practitioner guidance, and Fisher Phillips is advising employers to train leadership on the discoverability of AI chat histories.

The appeal is obvious. A chatbot is available at 2 a.m. It doesn’t bill by the hour. It doesn’t schedule a call for Thursday to discuss your question and then send three associates to “prepare” for it. It doesn’t give you a look when you float a bad idea. It doesn’t say “I can’t help you with that” — or if it does, it will change its mind if you rephrase the question. And it will never, under any circumstances, send you an invoice for telling you something you didn’t want to hear.

For an executive who has already decided what they want to do and is looking for validation rather than counsel, ChatGPT is the perfect advisor: infinitely patient, endlessly agreeable, and incapable of telling you that your plan will get you sued. It is the world’s most expensive yes-man — not because it costs a lot, but because of what it costs you when the transcript shows up in discovery.

A good lawyer’s most valuable function isn’t drafting documents or conducting research — it’s the willingness to deliver bad news. To tell a CEO that the contract is enforceable, that the plan will trigger litigation, that the clever workaround is actually a breach. A sycophantic model will never do this unprompted.

Kim’s lawyers told him the contract was enforceable. His head of corporate development told him the plan would fail. ChatGPT told him what he wanted to hear. He chose the chatbot.

The court chose the transcript.

The New Smoking Gun

In discovery, the most damaging evidence has always been the unguarded internal communication — the email where someone says the quiet part out loud, the Slack message sent at 11 p.m. that contradicts the official narrative. Litigation teams have spent decades training clients to be careful with email. AI chat logs are about to become the next front.

They’re worse than email, for three reasons.

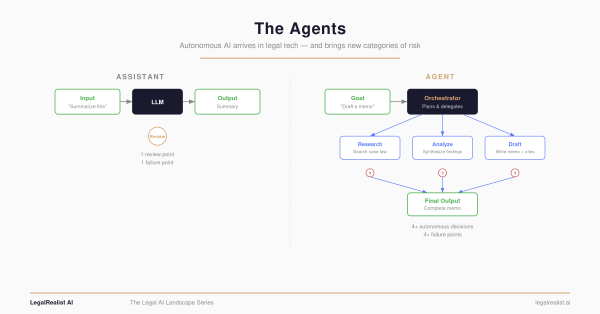

ChatGPT sessions are structured and sequential. An email thread can be ambiguous — pulled out of context, a single message might mean several things. A ChatGPT session is a step-by-step record of someone refining a plan. You can see the user’s initial question, the model’s pushback, the user pushing past it, and the final plan they landed on. Kim’s logs didn’t just show that he wanted to avoid the earnout. They showed him iterating toward a strategy to do it, prompt by prompt, with each exchange narrowing toward the plan he ultimately executed.

They show the user’s intent more clearly than any other document type. When you email a colleague, you’re performing — choosing what to share, how to frame it, what to leave out. When you type into ChatGPT, you’re thinking out loud. There’s no audience to perform for. The prompts are raw and unfiltered in a way that email almost never is. The Krafton court treated Kim’s ChatGPT logs exactly this way: not as research, but as a window into what he was actually trying to accomplish.

And unlike almost any other form of corporate communication, they’re nearly impossible to fully destroy. Kim deleted some conversations and they were still used against him in court. OpenAI’s data retention policies mean that even deleted conversations may persist on the provider’s servers — and those servers are subject to subpoena and third-party discovery. Every major AI provider retains user data for some period regardless of user-side deletion. There is no “burn after reading” option.

For litigation teams, the implication is straightforward. If your opposing party’s executives used consumer AI tools during the relevant period, their chat logs are a discovery target — and they may contain the most candid record of decision-making in the entire document universe. If your own client’s executives used them, you need to know now, not after a preservation order.

The same logic applies to enterprise AI tools. An enterprise agreement may protect confidentiality and preserve privilege — but the logs still exist, and they’re still subject to internal discovery obligations. If your organization deploys Harvey, CoCounsel, Microsoft Copilot, or any other enterprise AI platform, someone at the organization is generating chat logs that reflect legal strategy, business decisions, and internal deliberations. Those logs need to be covered by your retention policies and your litigation hold procedures. Most organizations haven’t updated either. The typical litigation hold notice covers email, documents, text messages, and Slack. It doesn’t mention AI platforms — and if it doesn’t, a custodian who deletes their ChatGPT or Copilot history during a hold period may be creating a spoliation problem that nobody anticipated.

Further Reading

- Fortis Advisors LLC v. Krafton, Inc., C.A. No. 2025-0714-LWW (Del. Ch.). The full Delaware Court of Chancery opinion.

- A gaming CEO asked ChatGPT how to avoid paying a $250 million bonus — Fortune. Detailed mainstream account of the ruling.

- From Chatbot to Chancery — DarrowEverett. Legal analysis of the ruling’s M&A implications.

- Your AI Chats May Be Used Against You — Alston & Bird. AI chat logs as evidence in corporate litigation.

- United States v. Heppner — Harvard Law Review. Analysis of the first federal ruling on AI and privilege.

- S.D.N.Y. First-of-its-Kind Ruling: AI-Generated Documents Are Not Privileged — O’Melveny. Discussion of when attorney-directed AI use might preserve privilege.

- Loose AI Prompts Sink Ships — NYSBA. Practitioner guidance on AI discoverability.

- Can Your AI Chat History Be Used Against You? — Fisher Phillips. Practical takeaways for employers.

- Sycophancy in GPT-4o: What Happened — OpenAI. OpenAI’s own postmortem on the April 2025 sycophancy rollback.

- AI Sycophancy: Why Chatbots Agree With You — IEEE Spectrum. Research overview of how and why LLMs defer to users.

This is a standalone post on LegalRealist AI. It is intended for informational and educational purposes only and does not constitute legal advice. The Krafton litigation is ongoing; the Phase One ruling described here does not resolve the earnout damages question. The Heppner ruling is a trial-court decision from a single jurisdiction. Laws governing AI use, privilege, and discovery vary by jurisdiction.