TL;DR

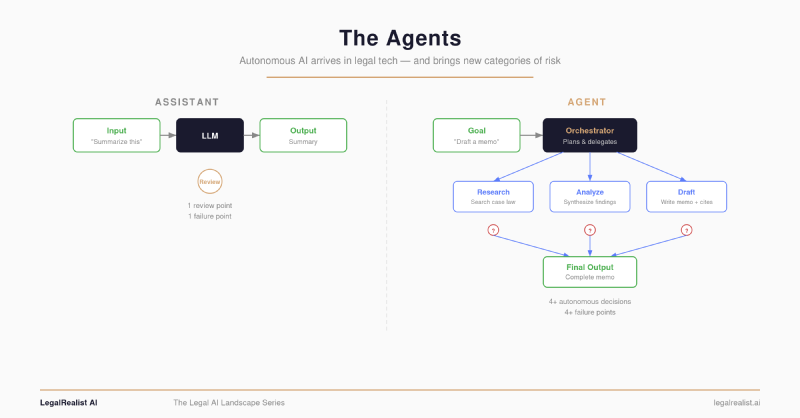

- Agentic AI doesn’t answer questions — it executes workflows. Agents make retrieval decisions, select tools, and sequence multi-step tasks without a human directing each step.

- Every major vendor has shipped agents. Harvey processes 400,000+ agentic queries daily. Thomson Reuters rebuilt CoCounsel on Anthropic’s Claude Agent SDK. LexisNexis deployed four specialized agents inside Protégé.

- Your ethical walls weren’t built for this. Existing conflicts systems assume a human is making each access decision. An agent processing hundreds of documents in minutes doesn’t ask permission at each retrieval step.

- Hallucination risk compounds across steps. A wrong retrieval call in step two means every subsequent step — analysis, drafting, citation — builds on that error.

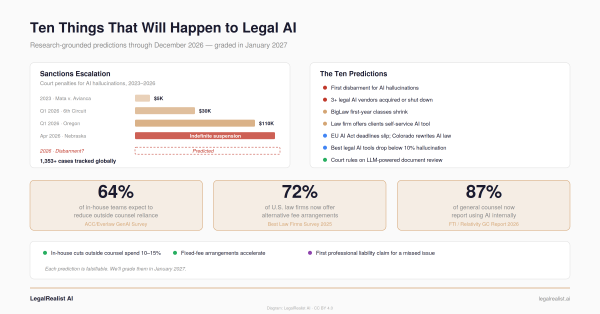

- The EU AI Act’s high-risk deadlines are August 2026, and legal AI is in scope. Colorado’s AI Act takes effect June 2026. The compliance window is months, not years.

- Ask four questions before you deploy. Auditability, failure modes, checkpoint triggers, and ethical wall integration.

At a recent Legalweek, a vendor demonstrated an AI agent that took a single litigation hold notice and autonomously identified custodians, mapped data sources, drafted preservation letters, and scheduled collection — all in under four minutes. The room went quiet. Not from awe. From unease. Everyone watching understood what they were seeing: not a faster tool, but a different kind of worker — one that doesn’t bill hours, doesn’t need training, and doesn’t forget steps.

The hype is everywhere. OpenClaw — an open-source AI agent framework created by Austrian developer Peter Steinberger — went from obscurity to 247,000+ GitHub stars in 60 days, making it one of the fastest-growing repositories in GitHub history. Nvidia’s Jensen Huang called it “the next ChatGPT.” OpenAI acquired the project in February 2026, bringing Steinberger in-house to build its next generation of personal agents — a signal that the company sees autonomous workflows, not chat, as the future of its product line. Meanwhile, Anthropic’s launch of Cowork — which featured AI agents for automating legal tasks like contract review and NDA triage — triggered a sell-off in legal-tech stocks that commentators dubbed the “SaaSpocalypse.” The message from the market was blunt: autonomous AI agents aren’t a feature update. They’re an architectural shift that threatens the business model of every tool that merely assists.

But the OpenClaw frenzy also previewed what happens without guardrails. Security researchers found over 135,000 publicly exposed instances. A campaign dubbed “ClawHavoc” flooded the skills marketplace with over 800 malicious plugins. A misconfigured agent recursively wiped a production database. One of OpenClaw’s own maintainers warned on Discord: “if you can’t understand how to run a command line, this is far too dangerous of a project for you to use safely.” China restricted government agencies from running it entirely.

Legal AI agents aren’t OpenClaw. They’re purpose-built, vendor-managed, and designed for regulated environments. But they share the same underlying architecture — an LLM making autonomous decisions in a multi-step loop — and the same fundamental question: how much autonomy is safe when the work product carries professional liability?

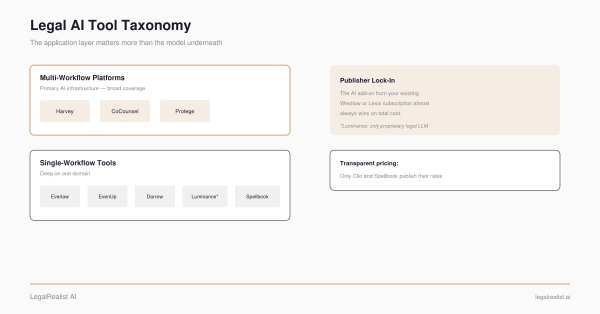

Every major legal AI vendor shipped something called “agentic AI” recently: Thomson Reuters, LexisNexis, Harvey, LegalOn, and others. The term is already being used so loosely it risks meaning nothing. This post defines it, maps who built what, and identifies the new categories of risk that didn’t exist when AI was just answering questions.

From Assistants to Agents

The legal AI tools profiled earlier in this series are assistants. You give them a task — summarize this deposition, flag the indemnification clause, research this statute — and they produce an output. One input, one output. You direct every step.

Agentic AI works differently. You give it a goal, and it plans and executes a multi-step workflow to reach that goal. A due diligence agent doesn’t just summarize one document; it searches a document set, builds a review table flagging material risks, identifies missing items, and generates a post-closing checklist. A litigation agent doesn’t just answer a research question; it decomposes the question into sub-queries, searches case law and statutes through different retrieval strategies, synthesizes findings, and drafts a memo with citations — pausing for human input only at defined checkpoints.

The architectural pattern is consistent across vendors: an orchestrator agent receives the goal, breaks it into subtasks, and delegates each subtask to specialized agents or tool calls. The orchestrator then synthesizes results and decides what to do next. Anthropic calls this the operator pattern. Harvey calls it Agent Builder. LexisNexis calls it agentic workflows. The naming varies; the structure doesn’t.
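
The orchestrator structure described above can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's implementation: the `Orchestrator` class, the `plan` method, and the two search tools are hypothetical names, and the planning and synthesis steps that a real system would hand to an LLM are replaced by fixed stand-ins.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# A "tool" here is any function from a query string to a result string.
Tool = Callable[[str], str]


@dataclass
class Step:
    """One delegated subtask and what came back."""
    tool: str
    query: str
    result: str


@dataclass
class Orchestrator:
    tools: Dict[str, Tool]
    trace: List[Step] = field(default_factory=list)

    def plan(self, goal: str) -> List[Tuple[str, str]]:
        # Stand-in for an LLM planning call: a fixed two-step decomposition.
        return [("search_case_law", goal), ("search_statutes", goal)]

    def run(self, goal: str) -> str:
        for tool_name, query in self.plan(goal):
            result = self.tools[tool_name](query)  # delegate the subtask
            self.trace.append(Step(tool_name, query, result))  # keep the intermediate step
        # Stand-in for the synthesis call: join the results in order.
        return "\n".join(f"[{s.tool}] {s.result}" for s in self.trace)


# Hypothetical tools; a real system would call a search index or an API here.
def search_case_law(query: str) -> str:
    return f"case law results for: {query}"


def search_statutes(query: str) -> str:
    return f"statute results for: {query}"


orchestrator = Orchestrator(
    tools={"search_case_law": search_case_law, "search_statutes": search_statutes}
)
memo = orchestrator.run("limitations period for breach of contract")
print(memo)
```

The `trace` list is the part worth noticing. The caller only receives `memo`, so the intermediate steps are visible only if the platform chooses to keep and expose something like `trace`.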

This is a meaningful shift. When you use an assistant, you see the input and the output. When you use an agent, the system makes dozens of intermediate decisions — which documents to retrieve, which tools to use, how to sequence the analysis, whether to search deeper or move on — that you never see unless the platform explicitly logs and surfaces them.

Who Shipped What

Harvey

Harvey launched Agent Builder in early 2026, enabling legal teams to create custom agents that handle multi-step tasks autonomously. The platform now processes over 400,000 agentic queries daily, with more than 25,000 custom agents operating across M&A, due diligence, contract drafting, and document review. Agent Builder evolves from Harvey’s earlier Workflow Builder with a critical difference: agents can reason through tasks dynamically rather than following predetermined steps. Harvey is also developing what it calls “long-horizon agents” — systems designed to operate over entire client matters like a team of associates. Internally, Harvey runs an autonomous agent called Spectre that increasingly operates without human prompts.

CoCounsel (Thomson Reuters)

Thomson Reuters rebuilt CoCounsel on Anthropic’s Claude Agent SDK for its next-generation platform, announced recently. The new CoCounsel is described as a “unified agentic platform” that plans, selects tools, retrieves authoritative content from Westlaw and Practical Law, and adapts mid-workflow. Thomson Reuters calls it “fiduciary-grade AI” — framing the system as a senior associate that works independently, not a first-year waiting for the next instruction. The platform includes a patent-pending citation ledger architecture that creates a session-verifiable evidence trail for citations, ensuring the agent can only cite what it actually retrieved.
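
The idea that an agent "can only cite what it actually retrieved" can be illustrated with a small ledger. Thomson Reuters has not published the design of its patent-pending architecture, so the sketch below is an assumption about the general technique, not a description of CoCounsel. The `CitationLedger` class, its methods, and the case names are all hypothetical.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class CitationLedger:
    # citation string -> SHA-256 digest of the text retrieved for it
    entries: Dict[str, str] = field(default_factory=dict)

    def record(self, citation: str, retrieved_text: str) -> None:
        """Register a source at the moment the agent retrieves it."""
        digest = hashlib.sha256(retrieved_text.encode("utf-8")).hexdigest()
        self.entries[citation] = digest

    def unverified(self, draft_citations: List[str]) -> List[str]:
        """Return citations in the draft that were never retrieved this session."""
        return [c for c in draft_citations if c not in self.entries]


ledger = CitationLedger()
# Placeholder strings stand in for the retrieved opinion text.
ledger.record("Case A (hypothetical)", "retrieved text A")
ledger.record("Case B (hypothetical)", "retrieved text B")

draft = ["Case A (hypothetical)", "Case C (hypothetical, never retrieved)"]
blocked = ledger.unverified(draft)
print(blocked)
```

Storing a digest rather than only the citation string means a reviewer could later confirm that the text behind a citation is the same text the agent saw. A membership check like this catches invented citations; it does not catch a real case cited for a proposition it does not support.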

Protégé (LexisNexis)

LexisNexis deployed four specialized agents inside Protégé: an Orchestrator Agent that decomposes complex queries, a Legal Research Agent that allows real-time user guidance, a Reflection Agent that reviews final responses, and a Shepard’s Citation Agent that checks legal citations in real time. The platform ships with hundreds of pre-built workflows spanning litigation, transactional, and everyday legal tasks — plus a custom Workflow Builder. Notably, LexisNexis has been more restrained in its “agent” language than competitors, emphasizing “automated workflows” and “teammate” framing over autonomy.

Others

LegalOn launched five AI agents for in-house legal teams in February 2026, handling intake through drafting. Freshfields signed a multi-year agreement with Anthropic to co-develop legal agentic workflows, deploying Claude across 5,700 employees in 33 offices. A&O Shearman partnered with Harvey to deploy AI agents for antitrust filing analysis, cybersecurity, fund formation, and loan review.

What Makes Agents Different and Riskier

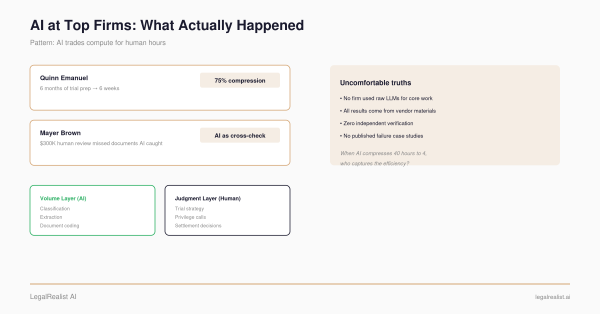

The risks of agentic legal AI are categorically different from the risks covered in The Fundamental Limits. Hallucination is still the baseline problem. But agents introduce three new risk categories that assistants don’t have.

The Ethical Wall Problem

Harvey’s CEO Winston Weinberg calls this the “number one” concern of law firms — and Harvey published a detailed technical framework explaining why.

Most Am Law 200 firms manage information barriers through Intapp’s conflicts checking system, iManage or NetDocuments access controls, and measures like separate floors and restricted email groups. These work because the boundaries are clear: documents live in folders, people have access lists, and firms can restrict access at every point.

Agents break this model in three ways. First, agents access documents directly. When an AI autonomously pulls 50 documents from a firm’s document management system to review an acquisition agreement, it’s making retrieval decisions without human oversight. Second, agents accumulate context. Over a long session, an agent builds up a context window that may contain information from multiple sources — and unlike a human, it can’t “unsee” something. Third, agents work too fast to monitor manually. A junior associate reviews maybe 50 documents daily. An agent processes hundreds in minutes. The supervising partner sees outputs, not the thousands of intermediate steps that produced them.

Harvey’s response was to partner with Intapp to embed ethical wall enforcement directly into the AI platform, syncing existing Intapp Walls policies with Harvey’s access controls across Assistant, Vault, and Workflows. But Harvey’s own framework was blunt about the stakes: “A firm that deploys AI agents without auditable ethical wall enforcement is creating discoverable evidence of inadequate screening procedures.” Courts can disqualify entire firms from matters over ethical wall failures. The malpractice exposure makes the productivity gain worthless.
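
One way to picture wall enforcement inside the agent loop is a retriever that checks the barrier on every fetch and logs the outcome either way. The sketch below is illustrative only and does not reflect the Intapp or Harvey APIs. `WalledRetriever`, `WallViolation`, the matter names, and the user ID are hypothetical, and the wall policy is reduced to a simple mapping from matter to screened users.

```python
from dataclasses import dataclass
from typing import Dict, List, Set, Tuple


@dataclass(frozen=True)
class Document:
    doc_id: str
    matter_id: str


class WallViolation(Exception):
    """Raised when a retrieval would cross an information barrier."""


class WalledRetriever:
    def __init__(self, user_id: str, walls: Dict[str, Set[str]],
                 store: Dict[str, Document]) -> None:
        self.user_id = user_id  # the lawyer on whose behalf the agent acts
        self.walls = walls      # matter_id -> user_ids screened from that matter
        self.store = store
        self.audit: List[Tuple[str, str, str]] = []

    def fetch(self, doc_id: str) -> Document:
        doc = self.store[doc_id]
        blocked = self.user_id in self.walls.get(doc.matter_id, set())
        # Log the outcome before returning or raising, so denials are auditable too.
        self.audit.append((doc_id, doc.matter_id, "denied" if blocked else "allowed"))
        if blocked:
            raise WallViolation(f"{self.user_id} is screened from {doc.matter_id}")
        return doc


store = {
    "D1": Document("D1", "matter-acquirer"),
    "D2": Document("D2", "matter-target"),
}
walls = {"matter-target": {"associate-17"}}
retriever = WalledRetriever("associate-17", walls, store)

retriever.fetch("D1")  # allowed
try:
    retriever.fetch("D2")  # denied: raises before the document reaches the agent
except WallViolation as exc:
    print("blocked:", exc)
```

The check runs per document, not once per session, and the denial is raised before any content enters the agent's context. That ordering matters because, as noted above, an agent cannot "unsee" what it has already loaded.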

Hallucination Compounds Across Steps

As covered in The Fundamental Limits, even the best RAG-based legal AI tools hallucinate 17-34% of the time on verifiable legal questions. With an assistant, the blast radius of a hallucination is one output: a single memo, a single research answer. You review it, catch it, fix it.

With an agent, a hallucination in an early step propagates. If the orchestrator retrieves the wrong case in step two, the analysis in step three builds on that error, and the draft in step four cites it as authority. The agent doesn’t know it’s wrong at step two, so it has no reason to course-correct at step three. The confident prose reads identically whether the underlying retrieval was accurate or fabricated, a pattern MIT researchers have documented.

The vendors with the strongest mitigation architectures are building verification into each step rather than only checking the final output. Thomson Reuters’ citation ledger creates a verifiable trail at each retrieval step. LexisNexis’s Reflection Agent reviews the final output before delivery. Harvey’s agents surface decisions and flag moments where user input would improve results before proceeding. But none of these eliminate the underlying problem: multi-step autonomy multiplies single-step risk.
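
A rough way to see how multi-step autonomy multiplies single-step risk is to compute the chance that at least one step in a chain goes wrong. The sketch below assumes each step fails independently with the same probability, which is a simplification: real steps are correlated, and some errors are caught downstream. The 5% per-step figure is an illustrative assumption, not a measured rate for any product.

```python
def chance_of_at_least_one_error(per_step_error: float, steps: int) -> float:
    """Probability that one or more of `steps` independent steps fails."""
    if not 0.0 <= per_step_error <= 1.0:
        raise ValueError("per_step_error must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    # Chance every step succeeds is (1 - p) ** n; the complement is the risk.
    return 1.0 - (1.0 - per_step_error) ** steps


# Illustrative only: a 5% per-step error rate across workflows of growing length.
for n in (1, 4, 8):
    risk = chance_of_at_least_one_error(0.05, n)
    print(f"{n} steps: {risk:.1%} chance of at least one error")
```

Under these assumptions, a 5% per-step error rate becomes roughly a 19% chance of at least one error over four steps and about 34% over eight. Per-step verification shrinks the per-step rate, which is why it helps more than a single check at the end.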

The Auditability Gap

The 2025 AI Agent Index — a study led by researchers from Cambridge, MIT, Harvard, Stanford, and other universities — systematically evaluated 30 deployed agentic AI systems and found that most developers share little information about safety, evaluations, and societal impacts. The study documented limited logging, inadequate disclosure of when systems are functioning as AI rather than human, and insufficient transparency about how agents make intermediate decisions.

For legal work, auditability isn’t a nice-to-have. It’s a professional obligation. When an agent makes a privilege determination — this document is not privileged, produce it — that decision needs to be traceable. Who (or what) made the call? What information did it have? What did it consider and reject? If opposing counsel challenges the production, can you reconstruct the decision chain?
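
The questions above (who made the call, what it had, what it rejected) map naturally onto fields in a decision log. The following is a minimal sketch of such a log, with hypothetical names throughout (`DecisionRecord`, `AuditTrail`, the actor labels, the document IDs). It is not drawn from any vendor's logging format.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List


def _utc_now() -> str:
    return datetime.now(timezone.utc).isoformat()


@dataclass
class DecisionRecord:
    step: int
    actor: str            # who or what made the call
    decision: str         # what was decided
    inputs: List[str]     # what information it had
    rejected: List[str]   # what it considered and rejected
    rationale: str
    timestamp: str = field(default_factory=_utc_now)


class AuditTrail:
    def __init__(self) -> None:
        self._lines: List[str] = []  # one JSON object per line, append-only

    def log(self, record: DecisionRecord) -> None:
        self._lines.append(json.dumps(asdict(record), sort_keys=True))

    def chain_for(self, doc_id: str) -> List[dict]:
        """Every logged decision that had doc_id among its inputs, in order."""
        records = [json.loads(line) for line in self._lines]
        return [r for r in records if doc_id in r["inputs"]]


trail = AuditTrail()
trail.log(DecisionRecord(1, "agent:retriever", "retrieved DOC-001",
                         ["DOC-001"], [], "matched custodian filter"))
trail.log(DecisionRecord(2, "agent:privilege-classifier", "not privileged, produce",
                         ["DOC-001"], ["privileged"], "no attorney on thread"))
trail.log(DecisionRecord(3, "agent:retriever", "retrieved DOC-002",
                         ["DOC-002"], [], "matched date range"))

chain = trail.chain_for("DOC-001")
print([(r["step"], r["actor"], r["decision"]) for r in chain])
```

If opposing counsel challenges the production of DOC-001, `chain_for` returns the retrieval and the privilege call in order, including the alternative the classifier rejected. A production system would also need tamper resistance and retention rules, which this sketch leaves out.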

No published court opinion has specifically addressed LLM-powered classification as a substitute for human first-pass review. Courts accepted technology-assisted review (TAR) in Da Silva Moore v. Publicis Groupe (2012) and Rio Tinto PLC v. Vale S.A. (2015) — but those opinions addressed predictive coding trained on attorney seed sets. Autonomous agents making privilege calls in real time are a different animal. When a court eventually rules on this, the firms with auditable decision trails will be in a fundamentally stronger position than those that can only show the final output.

The Regulatory Clock

The regulatory landscape is tightening on a timeline that matters for anyone deploying agents now.

The EU AI Act reaches full application for high-risk AI systems on August 2, 2026. Legal AI is explicitly in scope: “assistance in legal interpretation and application of the law” is listed as a high-risk category. Firms deploying AI agents in EU matters will need to comply with requirements around risk management, human oversight, transparency, and technical documentation. The European Commission’s Digital Omnibus proposal may push some deadlines to late 2027, but the direction is clear, and firms should prepare for the earlier date.

In the United States, the Colorado AI Act takes effect June 30, 2026, requiring developers and deployers of high-risk AI to use reasonable care to avoid algorithmic discrimination, develop risk management programs, and conduct impact assessments. New York’s RAISE Act was recently amended with penalties up to $1 million for a first violation. California’s CCPA-based automated decision-making regulations take effect January 2027. Meanwhile, the White House is pushing federal preemption of state AI laws, creating uncertainty about which rules will ultimately apply.

The common thread across every framework: human oversight, explainability, and auditability. Precisely the areas where autonomous agents create the most new risk.

The Human Oversight Paradox

Here’s the tension at the center of agentic legal AI: the whole point of agents is to reduce human involvement in routine steps, but the professional obligation to supervise remains the same.

ABA Formal Opinion 512 (July 2024) requires lawyers to take reasonable measures when using AI. Courts have extended this to mean: your duty to verify citations, check reasoning, and ensure accuracy applies regardless of whether the work was done by an associate, a contract reviewer, or an AI system. As NPR reported, sanctions for AI-generated errors are accelerating, not slowing — and judges are referring attorneys to disciplinary bodies.

The vendors understand this. Harvey builds human-in-the-loop checkpoints where agents pause for user input at defined moments. Protégé uses a Reflection Agent that reviews its own output before delivering it. CoCounsel’s citation ledger creates a verifiable evidence trail.

But as Above the Law noted, the competitive pressure to be “more autonomous” could push vendors to reduce friction in ways that create real liability. There’s a spectrum from “pause at every step” (which defeats the point of autonomy) to “show me the final output” (which makes meaningful review impossible). The best agent architectures sit somewhere in the middle — surfacing the decisions that matter while handling routine steps autonomously. Where that line falls is, right now, a product design decision made by engineers, not a standard set by regulators or courts.

When a vendor says “human-in-the-loop,” ask: how many loops, and which decisions trigger them? A checkpoint after every step is an assistant with extra steps. A checkpoint only at the end is an agent you can’t supervise. The useful question is what triggers the pause.
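
The difference between a checkpoint on a fixed schedule and a checkpoint with a trigger is easy to show side by side. The sketch below is a hypothetical illustration: `AgentDecision`, both pause functions, the 0.80 threshold, and the ten-document interval are assumptions chosen for the example, and it takes for granted that the agent reports a usable confidence score, which is not a given.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class AgentDecision:
    doc_id: str
    label: str
    confidence: float  # assumed to be a score in [0, 1] reported by the agent


def pause_on_interval(index: int, every: int = 10) -> bool:
    """Fixed-interval checkpoint: pause on every Nth decision, whatever it is."""
    return (index + 1) % every == 0


def pause_on_trigger(decision: AgentDecision, threshold: float = 0.80) -> bool:
    """Trigger-based checkpoint: pause when confidence falls below the threshold."""
    return decision.confidence < threshold


decisions: List[AgentDecision] = [
    AgentDecision("DOC-001", "not privileged", 0.97),
    AgentDecision("DOC-002", "privileged", 0.62),
    AgentDecision("DOC-003", "not privileged", 0.91),
]

interval_queue = [d.doc_id for i, d in enumerate(decisions) if pause_on_interval(i)]
trigger_queue = [d.doc_id for d in decisions if pause_on_trigger(d)]
print("interval:", interval_queue)
print("trigger:", trigger_queue)
```

With only three documents, the interval rule never fires and the uncertain call on DOC-002 goes unreviewed, while the trigger rule routes exactly that document to a human. A trigger is only as good as the signal behind it, so a poorly calibrated confidence score would undermine the approach.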

What to Ask Before You Deploy

If your firm is evaluating or deploying agentic AI tools, four questions cut through the marketing:

“Can I see every step the agent took?” Not just the final output with citations — every retrieval decision, every tool call, every point where the agent chose one path over another. If the vendor can’t show you the intermediate steps, you can’t supervise the work product, and you can’t defend it in court.

“What happens when the agent encounters something it can’t classify?” This is the real quality test. Every vendor demo shows the agent flawlessly preparing a privilege log in minutes. Ask instead about failure modes: contradictory instructions, ambiguous documents, edge cases. Does the agent escalate? Guess? Skip?

“Where are the human checkpoints, and what triggers them?” Not all checkpoints are equal. Pre-defined thresholds (“pause if confidence is below 80%”) are better than fixed intervals (“pause every ten documents”). Checkpoints that surface the agent’s reasoning at the decision point are better than checkpoints that just show you the decision.

“How does this interact with our ethical walls and conflicts system?” If the agent can access your document management system, it needs to respect every information barrier your firm has in place — not just at the session level, but at every retrieval step. Harvey’s Intapp integration is the first purpose-built solution. If your vendor doesn’t have an answer here, your agent is a malpractice risk.

Further Reading

- How Autonomous Agents Will Transform Legal (Harvey). Harvey co-founder Gabe Pereyra’s thesis on why legal is the next domain for autonomous agents.

- Long Horizon Agents and Ethical Walls (Harvey). The technical framework for why existing information barriers break down with agentic AI.

- The Rise of Agentic AI in Legal Technology (PlatinumIDS). Comprehensive analysis of every major vendor’s agentic AI launch, with evaluation criteria.

- Next Gen CoCounsel to Offer “Fiduciary-Grade” Legal AI (Artificial Lawyer). Thomson Reuters’ announcement of the Claude Agent SDK-powered CoCounsel rebuild.

- Building Agents with the Claude Agent SDK (Anthropic, January 2026). The technical architecture underlying CoCounsel’s next-generation platform.

- The 2025 AI Agent Index (Staufer et al., Cambridge/MIT/Harvard/Stanford). Systematic evaluation of safety and transparency across 30 deployed agentic AI systems.

- Autonomous AI in Law Firms: What Could Possibly Go Wrong? (Above the Law). Cybersecurity risks specific to autonomous agents in legal environments.

- State AI Laws — Where Are They Now? (Cooley). Current status of every major U.S. state AI law, including Colorado, New York, and California.

- EU AI Act Regulatory Framework (European Commission). Official timeline and requirements for high-risk AI systems, including legal applications.

- OpenClaw: The Rise of an Open-Source AI Agent Framework (clawbot.blog). Technical deep dive on OpenClaw’s growth, security incidents, and the broader agent ecosystem.

This post is part of The Legal AI Landscape series on LegalRealist AI. It is intended for informational and educational purposes only and does not constitute legal advice. AI capabilities, vendor claims, and regulatory timelines described here reflect publicly available information as of the publication date and are subject to rapid change. Laws governing AI use vary by jurisdiction.