TL;DR

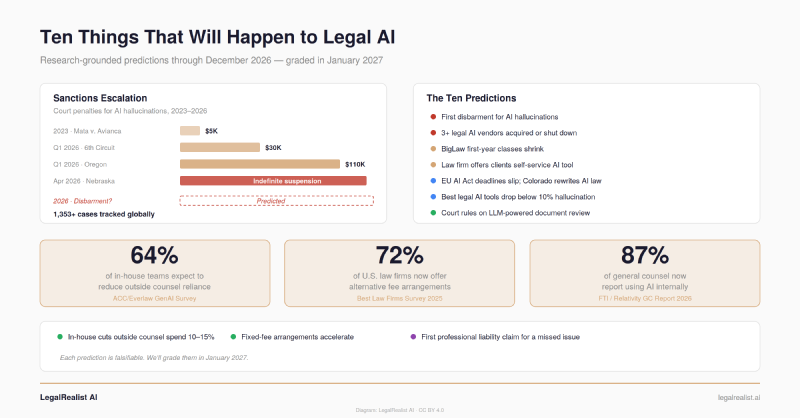

- Courts have sanctioned lawyers $145,000 for AI hallucinations in recent months alone. The escalation from fines to suspensions to disbarment is a matter of months, not years.

- At least three legal AI vendors will be acquired or shut down. Point solutions that raised seed rounds in 2023–2024 are hitting the wall between traction and runway.

- BigLaw will hire fewer first-years even as total associate headcount grows. Only 35% of large firms plan to increase first-year class sizes through 2027, while 86% plan to grow their overall associate ranks.

- In-house departments will cut outside counsel spend 10–15% through AI-powered insourcing. The ACC/Everlaw survey shows 64% of in-house teams expect to reduce reliance on outside counsel — and they’re building the tools to do it.

- Run your own benchmark before year-end. Most of these predictions will affect your practice. An hour of testing with your own documents tells you more than any forecast.

Most legal AI commentary hedges. “AI could transform the profession” — or it might not. “Firms may need to adapt” — but who knows when. Predictions framed as possibilities aren’t predictions. They’re atmosphere.

This post does something different: it commits ten specific, falsifiable predictions to paper. Not “AI will change things” — concrete claims about what will happen to courts, firms, vendors, clients, and regulators before the end of 2026. Each one is grounded in data that already exists: enforcement trends, hiring surveys, deal activity, regulatory timelines, and benchmark results. Some will be wrong. That’s the point. Vague predictions can’t be wrong, which means they can’t be useful either.

We’ll grade this post in January 2027 and publish the scorecard. In the meantime, here’s the case for each one.

Nebraska recently ordered the first indefinite license suspension for AI-hallucinated citations. Sullivan & Cromwell sent an emergency letter to a federal bankruptcy judge shortly after, attaching a chart of fabricated cases its lawyers had submitted. Damien Charlotin’s database now tracks more than 1,350 court cases globally involving AI-generated hallucinations, up from under 200 eighteen months ago. The profession is reorganizing around this technology faster than most commentary acknowledges, and the changes coming before year-end are less about what AI can do and more about how institutions respond to what it’s already doing.

The First Disbarment for AI-Hallucinated Citations

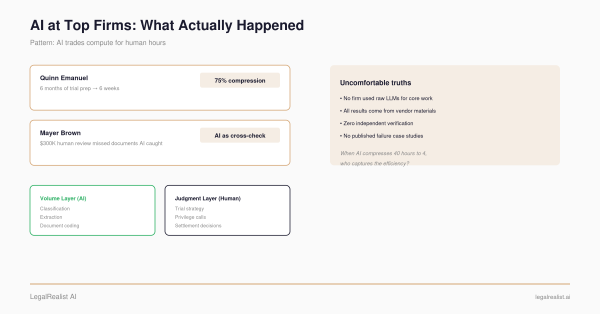

The sanctions escalation tells a clear story. In 2023, Mata v. Avianca produced a $5,000 fine. More recently, the Sixth Circuit imposed $30,000 in sanctions for fabricated citations. An Oregon court levied $110,000, the largest single AI hallucination penalty on record. Nebraska ordered an indefinite license suspension. Courts are now stacking remedies: Rule 11 sanctions, contempt findings, and bar referrals from a single incident.

The trajectory — warnings, fines, suspensions — has one destination left. A full disbarment will likely involve a repeat offender or an attorney who attempted to conceal the AI’s role, as in the Nebraska case where the lawyer initially denied using AI before admitting it was a “grave error of judgment.” The Fifth Circuit has already signaled that using enterprise legal AI tools doesn’t mitigate sanctions: an attorney sanctioned $2,500 had used vLex and CoCounsel.

At Least Three Legal AI Vendors Will Be Acquired or Shut Down

The consolidation has already started. Legora acquired Walter shortly after raising $550 million. Thomson Reuters bought Noetica recently. Litera’s Dennis Garcia described the dynamic plainly: the legal technology market is crowded, competition is intense, and more M&A is inevitable.

The math driving consolidation is simple. Legal AI startups that raised seed or Series A rounds in 2023–2024 are 18–24 months in. The ones without meaningful revenue traction face a choice: find a buyer or shut down. Gartner predicts over 40% of agentic AI projects will be canceled by end of 2027 due to escalating costs or unclear business value. Forrester projects that enterprises will defer 25% of planned AI spend into 2027 due to ROI concerns.

Expect at least one acquisition above $500 million — likely a major publisher or enterprise software company buying a legal AI platform to add workflow capabilities. Point solutions that do one thing well but lack distribution or platform economics are the most vulnerable.

First-Year Associate Classes Will Shrink at BigLaw Firms

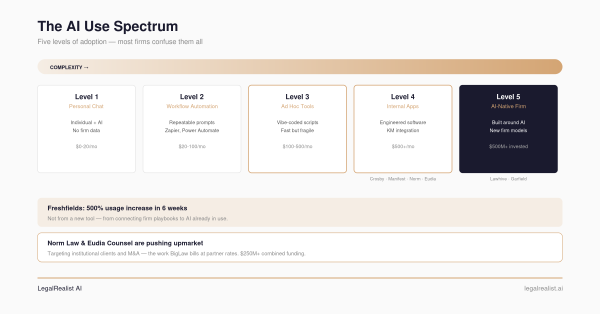

Here’s the data point that hasn’t gotten enough attention. Law360 reported in December 2025 that 86% of large law firms plan to increase their total associate ranks through 2027 — but only 35% plan to increase the size of their first-year classes. That 51-percentage-point gap tells you exactly where the leverage model is heading: more senior associates, fewer juniors.

The logic is straightforward. AI absorbs the work that historically justified large first-year classes: document review, initial research, routine drafting. Harvard CLP documented one AmLaw 100 firm reducing complaint response time from 16 hours to 3–4 minutes. That’s not a task that needs a first-year anymore. Ropes & Gray now asks summer associate applicants to explain what they’re doing daily to keep up with AI development — a signal that AI fluency is becoming a hiring filter, not a nice-to-have.

This doesn’t mean fewer lawyers overall. It means fewer entry points into BigLaw, with the ones that remain demanding different skills. The NALP employment data for the class of 2024 showed record employment rates — but median law firm starting salaries dipped 3%, a subtle signal that the hiring market may already be softening at the entry level.

A Major Law Firm Will Offer Clients a Self-Service AI Tool

The pieces are in place. Legora’s Portal already creates shared AI workspaces between firms and clients — Linklaters, Cleary Gottlieb, and Goodwin signed on as design partners. Wilson Sonsini’s Chief Innovation Officer predicted a proliferation of self-serve AI tools from law firms for narrow, repeatable use cases.

The business case is defensive. If a corporate client can use GC AI or a general-purpose LLM to handle NDA review internally, the firm loses that work entirely. If the firm builds a branded, playbook-constrained tool and offers it to the client directly — covering standard contract review, compliance checklists, or regulatory screening — the firm retains the relationship and the fees for anything the tool escalates. The first firm to do this credibly converts a cost center (routine advisory work clients are already insourcing) into a client retention mechanism.

The EU AI Act’s High-Risk Deadlines Will Slip, and Colorado Will Rewrite Its AI Law

Both were supposed to arrive this year. Neither will arrive as written.

The European Commission’s November 2025 “Digital Omnibus” proposal is pushing key AI Act compliance deadlines toward 2027–2028. The amendments must be adopted before August, or the original dates apply — but EU institutions are actively negotiating extensions. In the U.S., Colorado’s AI Policy Work Group released a recent proposal to repeal and replace much of SB 205, resetting the effective date to January 2027. The proposal narrows the law’s scope, replacing “high-risk AI systems” with “covered automated decision-making technology” and limiting what counts as a “consequential decision.”

The pattern is the same on both continents: comprehensive AI laws written in 2023–2024 are colliding with the reality that compliance regimes need standards that don’t exist yet, enforcement infrastructure that hasn’t been built, and categories that don’t map cleanly onto how AI is actually deployed. The regulatory trend for the rest of the year is delay-and-narrow, not repeal. Both laws will eventually take effect — but not on the timeline their drafters imagined.

Hallucination Rates for the Best Legal AI Tools Will Drop Below 10%

Stanford’s 2024 testing found Lexis+ AI hallucinated 17% of the time, the best rate among tools tested. Westlaw AI-Assisted Research hit 34%. Since then, foundation models have improved substantially, Graph RAG architectures have matured, and citation verification pipelines have tightened. A March 2025 randomized controlled trial found RAG-based tools achieving productivity gains of 38–115% while maintaining hallucination rates comparable to non-AI human work.

The best publisher tools (CoCounsel with KeyCite, Protégé with Shepard’s) will push citation hallucination rates below 10% by year-end. But the harder problem is mischaracterization: citing a real case while misstating its holding. Citation verification catches reversed or overruled cases. It doesn’t catch subtle misrepresentation, and that category of error will remain stubbornly high. This gap will become the primary quality differentiator between legal AI products, and the one most difficult for buyers to evaluate without hands-on testing.

Courts Will Rule on the Defensibility of LLM-Powered Document Review

Courts accepted technology-assisted review in 2012 (Da Silva Moore) and 2015 (Rio Tinto). Those opinions addressed predictive coding — supervised machine learning trained on attorney seed sets. LLM-powered first-pass review is a different technology with different failure modes, and no published opinion has specifically addressed it.

With Relativity aiR now bundled into standard RelativityOne pricing (reaching 300,000+ users), Everlaw processing millions of documents per hour, and Syllo handling first-pass review on matters like the Desktop Metal trial, the volume of LLM-classified documents entering litigation is growing rapidly. A privilege blowup, meaning an inadvertent production of a privileged document that an LLM flagged as non-privileged, will force a court to address whether LLM-based classification meets the “reasonable inquiry” standard under the Federal Rules.

The ruling will likely be favorable. Courts have generally embraced technology-assisted review. But it will establish specific requirements: what validation protocols satisfy reasonableness, what disclosure is required about AI’s role in the review, and how error rates should be documented for defensibility.
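To make "documented error rates" concrete, here is a minimal sketch of one way a review team might summarize a validation sample: attorneys independently code a random sample of LLM-classified documents, and the team reports recall and precision for the privilege call along with a confidence interval. The function names, the sample layout, and the choice of a 95% Wilson interval are all assumptions for illustration, not a protocol any court has endorsed.

```python
import math

def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple:
    """Wilson score interval for a binomial proportion (z=1.96 is roughly 95%)."""
    if n <= 0:
        raise ValueError("sample size must be positive")
    p = successes / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = (z / denom) * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n))
    return center - half, center + half

def review_metrics(sample) -> dict:
    """sample: iterable of (llm_says_privileged, attorney_says_privileged) booleans.

    The attorney coding is treated as ground truth (an assumption).
    """
    tp = fp = fn = 0
    for llm, attorney in sample:
        if llm and attorney:
            tp += 1          # privileged, and the LLM caught it
        elif llm and not attorney:
            fp += 1          # LLM over-flagged a non-privileged document
        elif attorney:
            fn += 1          # privileged document the LLM missed
    if tp + fn == 0 or tp + fp == 0:
        raise ValueError("sample has no privileged documents or no LLM flags")
    return {
        "recall": tp / (tp + fn),
        "recall_ci": wilson_interval(tp, tp + fn),
        "precision": tp / (tp + fp),
        "missed_privileged": fn,
    }

# Hypothetical 500-document validation sample (made-up counts).
sample = (
    [(True, True)] * 90      # correctly flagged as privileged
    + [(False, True)] * 10   # privileged but missed
    + [(True, False)] * 20   # flagged but not privileged
    + [(False, False)] * 380 # correctly left unflagged
)
metrics = review_metrics(sample)
print(metrics)
```

The interval matters as much as the point estimate: with only 100 privileged documents in the sample, a measured recall of 90% is still consistent with a true recall several points lower.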

In-House Departments Will Cut Outside Counsel Spend 10–15%

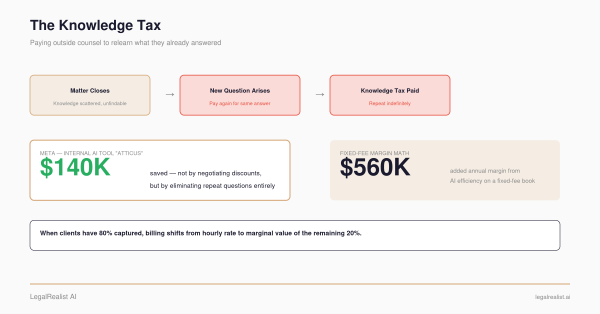

The ACC/Everlaw GenAI Survey found 64% of in-house teams expect to depend less on outside counsel because of AI capabilities they’re building internally. 87% of general counsel now report using AI within their departments, up from 44% in recent years. Meta saved $140,000 on a single category of repeat queries by building an internal AI assistant. GC AI reports a 14% average reduction in outside counsel spend among its customers — roughly $252,000 annually for a median legal department.

The reduction won’t come from rate negotiation. It will come from eliminating categories of work that go outside the building: standard contract review, routine regulatory questions, first-pass research, template drafting. As Google X’s Alex Ponce de Leon described it at the latest Legalweek, generative AI is enabling in-house teams to become augmented legal advisors, reserving outside counsel for truly complex, high-stakes work. Firms that don’t adapt their pricing models will see it in 2027 panel reviews.

Fixed-Fee Arrangements Will Accelerate, Driven by AI Economics

72% of U.S. law firms already offer some form of alternative fee arrangement, rising to 90% among firms with 50+ lawyers. 71% of legal consumers prefer flat fees. But most AFA usage is concentrated in routine transactional work — the billable hour still dominates high-value litigation and advisory.

AI is changing the arithmetic. Under hourly billing, a tool that reduces a task from eight hours to two costs the firm six hours of revenue. Under a fixed fee, the same tool converts those six hours into margin. Clio’s data shows firms with wide AI adoption are nearly three times more likely to report revenue growth. Duane Morris published a detailed argument for fixed fees in recurring securities law work — a practice area that historically billed hourly. The argument isn’t ideological; it’s that fixed fees align incentives with AI adoption while hourly billing fights it.
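The eight-hours-to-two arithmetic can be worked through in a few lines. This is a sketch under stated assumptions: the $500 billing rate, the $200 internal cost per lawyer hour, and the $4,000 fixed fee (set equal to eight hours at the hourly rate) are hypothetical numbers chosen only to make the comparison visible.

```python
def hourly_margin(hours: float, rate: float, cost_per_hour: float) -> float:
    """Margin when the client pays for each hour worked."""
    return hours * (rate - cost_per_hour)

def fixed_fee_margin(fee: float, hours: float, cost_per_hour: float) -> float:
    """Margin when the client pays a flat fee regardless of hours."""
    return fee - hours * cost_per_hour

# All figures below are hypothetical.
RATE = 500.0           # billing rate per hour
COST = 200.0           # firm's internal cost per lawyer hour
FEE = 4000.0           # fixed fee, equal to 8 hours at RATE
HOURS_BEFORE = 8.0     # task time without the AI tool
HOURS_AFTER = 2.0      # task time with the AI tool

# Hourly billing: six fewer hours means six fewer hours of revenue.
revenue_lost_hourly = (HOURS_BEFORE - HOURS_AFTER) * RATE
hourly_margin_change = (
    hourly_margin(HOURS_AFTER, RATE, COST) - hourly_margin(HOURS_BEFORE, RATE, COST)
)

# Fixed fee: revenue is unchanged, so the saved hours become margin.
fixed_margin_change = (
    fixed_fee_margin(FEE, HOURS_AFTER, COST) - fixed_fee_margin(FEE, HOURS_BEFORE, COST)
)

print(f"Hourly billing: revenue falls by ${revenue_lost_hourly:,.0f}, "
      f"margin changes by ${hourly_margin_change:,.0f}")
print(f"Fixed fee: margin changes by ${fixed_margin_change:,.0f}")
```

With these inputs the same tool lowers margin under hourly billing and raises it under a fixed fee, which is the incentive gap the paragraph above describes. Real engagements would also need to account for tool licensing costs and any fee concessions the client negotiates.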

The prediction isn’t that hourly billing dies. It won’t — not for high-stakes, unpredictable litigation. The prediction is that fixed-fee arrangements expand from routine work into recurring advisory and compliance work that AI makes more predictable, and that this expansion accelerates as clients demand AI-driven pricing concessions in 2027 panel reviews.

A Legal AI Product Will Face Its First Professional Liability Claim for a Missed Issue

Everyone is watching for hallucinated citations. The higher-stakes failure mode is what the AI doesn’t flag: a buried change-of-control provision in a 200-page credit agreement, a regulatory deadline in a footnote, an indemnification cap that contradicts the term sheet.

As firms increase reliance on AI for first-pass review and reduce the human hours allocated to the same work, the probability of a consequential miss rises. The claim won’t target the model provider — it will target the firm that relied on the tool without adequate verification, and possibly the vendor under a breach-of-warranty or negligence theory. The Sullivan & Cromwell incident demonstrated that even firms with comprehensive AI policies, training requirements, and citation review procedures can fail to catch AI errors. Apply that dynamic to a transactional context — where a missed term doesn’t embarrass the firm in court but costs the client money — and the liability exposure is clear.

This is the risk that no benchmark measures and no vendor addresses in its marketing materials. When a tool’s accuracy is 95%, the question is what’s in the other 5%, and whether anyone was assigned to look.
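A rough way to see why "the other 5%" matters at document scale: if a tool flags each material provision correctly 95% of the time, the chance of at least one miss grows quickly with the number of provisions. The sketch below assumes each provision is caught or missed independently and that 95% is a per-provision rate; both are simplifying assumptions, and the count of 40 provisions is hypothetical.

```python
def expected_misses(n_items: int, accuracy: float) -> float:
    """Expected number of missed items at a given per-item accuracy."""
    return n_items * (1.0 - accuracy)

def prob_at_least_one_miss(n_items: int, accuracy: float) -> float:
    """Probability of one or more misses, assuming independent items."""
    return 1.0 - accuracy ** n_items

N_PROVISIONS = 40   # hypothetical count of material provisions in one agreement
ACCURACY = 0.95     # per-provision accuracy (assumed interpretation of "95%")

misses = expected_misses(N_PROVISIONS, ACCURACY)
p_any = prob_at_least_one_miss(N_PROVISIONS, ACCURACY)

print(f"Expected misses: {misses:.1f}")
print(f"Chance of at least one miss: {p_any:.0%}")
```

Under these assumptions a single agreement carries about two expected misses and a high chance of at least one, so the verification step decides whether a miss reaches the client.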

Run Your Own Benchmark Before Year-End

Most of these predictions will touch your practice before December. The firms and departments that navigate them well won’t be the ones who predicted the future correctly — they’ll be the ones who tested their tools against their own work.

Pick a task you’ve already completed. Pull your answer key. Give the same task to two or three models. Grade blind. Calculate whether the cost difference justifies the quality difference at your volume. An hour of testing with your own documents tells you more than any forecast — including this one.
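The steps above can be sketched in a short script: hide which model produced which response, grade against your answer key, then compare cost and quality at your monthly volume. Everything named here is a placeholder: the model names, scores, per-task costs, and volume are hypothetical, and the score is assumed to be the fraction of answer-key points a response gets right.

```python
import random
from typing import Optional

def blind_outputs(outputs: dict, seed: Optional[int] = None):
    """Shuffle model outputs and relabel them so the grader cannot see the source.

    outputs maps model name -> response text.
    Returns (blinded, key): label -> text, and label -> model name.
    """
    rng = random.Random(seed)
    models = list(outputs)
    rng.shuffle(models)
    blinded, key = {}, {}
    for i, model in enumerate(models):
        label = f"Response {chr(ord('A') + i)}"
        blinded[label] = outputs[model]
        key[label] = model
    return blinded, key

def unblind(grades: dict, key: dict) -> dict:
    """Map the grader's per-label scores back to model names."""
    return {key[label]: score for label, score in grades.items()}

def monthly_tradeoff(cheap: dict, pricey: dict, tasks_per_month: int) -> dict:
    """Each argument is {"score": fraction correct, "cost": dollars per task}."""
    extra_cost = (pricey["cost"] - cheap["cost"]) * tasks_per_month
    quality_gain = (pricey["score"] - cheap["score"]) * tasks_per_month
    return {
        "extra_cost": extra_cost,
        "quality_gain": quality_gain,  # score-weighted tasks improved per month
        "cost_per_improved_task": extra_cost / quality_gain if quality_gain > 0 else None,
    }

# Hypothetical run with two models on one completed task.
outputs = {"model_x": "draft memo from model X", "model_y": "draft memo from model Y"}
blinded, key = blind_outputs(outputs, seed=7)

# In practice a reviewer scores each blinded label against the answer key.
# These stand-in scores are keyed by model only so the example is reproducible.
hypothetical_scores = {"model_x": 0.80, "model_y": 0.90}
grades = {label: hypothetical_scores[key[label]] for label in blinded}

by_model = unblind(grades, key)
result = monthly_tradeoff(
    cheap={"score": by_model["model_x"], "cost": 0.50},
    pricey={"score": by_model["model_y"], "cost": 2.00},
    tasks_per_month=200,
)
print(result)
```

Whether the resulting cost per improved task is worth paying depends on what a miss costs in your practice, which is a judgment the script cannot make for you.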

Further Reading

- AI Hallucination Cases Database. Damien Charlotin’s tracker of 1,353+ court filings with AI-generated fabrications.

- The 2026 Legal AI Reckoning. Case-by-case breakdown of every major 2026 hallucination sanction.

- Legal M&A Trends Q2 2026. AI consolidation and platform expansion in the legal industry.

- Law Firms’ Junior Roles At Risk From AI. Law360 survey on associate hiring plans through 2027.

- Hallucination-Free? (Stanford HAI). Independent testing of RAG-based legal AI tools (17–34% hallucination rates).

- State AI Laws — Where Are They Now?. Cooley’s tracker of the evolving U.S. AI regulatory landscape.

- 2026 Year in Preview: AI Regulatory Developments. Wilson Sonsini’s top 10 AI regulatory developments to watch.

- Law Firms Embrace AFAs, But Clients Want More Flexibility. Best Law Firms’ survey on alternative fee arrangement adoption.

- Google X’s Discovery Leader on Gen AI and Outside Counsel. How insourcing is reshaping the legal department relationship.

- 85 Predictions for AI and the Law in 2026. National Law Review’s expert survey.

This is a standalone post on LegalRealist AI. It is intended for informational and educational purposes only and does not constitute legal advice. Predictions reflect publicly available data and identified trends as of the publication date; outcomes are inherently uncertain. AI capabilities, regulatory timelines, and market conditions described here are subject to rapid change. Laws governing AI use vary by jurisdiction.