TL;DR

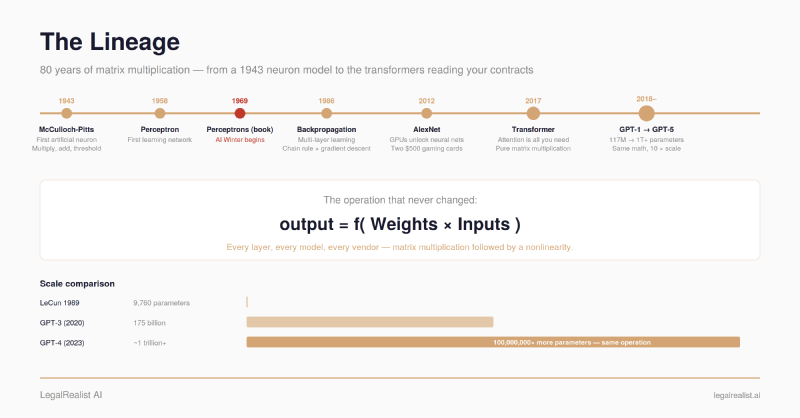

- Every AI model is matrix multiplication. The same weighted-sum-and-threshold operation described in 1943 — scaled up by a factor of 100 million — is the operation running on every GPU in every data center powering today’s AI tools.

- The field went through an AI winter. Minsky and Papert’s 1969 proof that single-layer networks can’t solve basic problems froze funding for nearly two decades. Backpropagation resurrected it in 1986 — and remains how every model learns today.

- Transformers didn’t invent attention — they removed everything else. The 2017 architecture bet that powers every frontier model is pure matrix multiplication, which is why it maps so perfectly onto GPU hardware.

- Scale turned architecture into engineering — until it hit a ceiling. Scaling laws showed that performance improves predictably with more compute, but each gain costs more than the last. The industry may be running out of data before it runs out of GPUs.

- The next architecture will win when it finds its hardware match. The transformer won because GPUs were already optimized for matrix multiplication. Chipmakers responded by building silicon purpose-designed for the workload. The pattern repeats.

In 2022, Andrej Karpathy — Tesla’s former AI director and an OpenAI co-founder — reproduced a 1989 paper by Yann LeCun that trained a small neural network to recognize handwritten zip code digits. The network had 9,760 trainable parameters and took three days to train on a SUN-4/260 workstation. Karpathy’s MacBook Air finished in 90 seconds. The code is on GitHub — clone the repo, run python repro.py, and train the same fundamental approach that underpins every modern

LLM in about a minute and a half on any laptop. His reflection: “Everything reads remarkably familiar, except it is smaller.”

LeCun’s 1989 network and the

large language models powering today’s legal AI tools share the same fundamental operation. The difference is scale: GPT-4 has an estimated trillion-plus parameters — a 100-million-fold increase. The math hasn’t changed. The electricity bill has.

This post traces the technical lineage from a 1943 neuron model to the Transformer architecture powering today’s AI tools. It’s the first post in our History of AI series. For who builds these models, what they cost, and how to evaluate them, see The Foundation.

The Neuron as Arithmetic#

In 1943, neurophysiologist Warren McCulloch and logician Walter Pitts published “A Logical Calculus of the Ideas Immanent in Nervous Activity.” The paper proposed a mathematical model of a biological neuron: take some inputs, multiply each by a weight, add them up, and fire if the sum exceeds a threshold. Pitts was eighteen and homeless when McCulloch invited him to live with his family in 1942. The paper they co-wrote was published a year later. It received almost no attention until John von Neumann and Norbert Wiener picked up the ideas years later.

The name “neural network” invites a misunderstanding worth clearing up. These systems were inspired by neuroscience — I first encountered the connection in a cognitive science class on visual systems, where we studied how David Hubel and Torsten Wiesel mapped the hierarchical structure of the visual cortex in the late 1950s and 1960s, work that directly influenced later network architectures. But an artificial neural network is a mathematical abstraction, not a simulation. A biological neuron has thousands of synapses, operates through electrochemical signaling, and behaves in ways neuroscience still doesn’t fully understand. An artificial neuron multiplies inputs by weights and sums them. The math borrowed a structural idea from biology, then scaled it to a trillion parameters.

The McCulloch-Pitts neuron did one thing: multiply and add. Each input gets multiplied by a weight that represents its importance, the products get summed, and the result passes through a threshold function that outputs 1 or 0. In modern notation: output = f(w₁x₁ + w₂x₂ + … + wₙxₙ). That multiply-and-add operation is the atomic unit of every neural network ever built.

In 1958, psychologist Frank Rosenblatt built the Perceptron, the first neural network that could learn. McCulloch and Pitts’s neuron had fixed weights chosen by the designer. Rosenblatt’s Perceptron adjusted its weights automatically based on whether its output was right or wrong. Feed it a set of training examples, and it gradually tunes its weights to produce correct classifications. The New York Times reported that the Navy expected it to “walk, talk, see, write, reproduce itself and be conscious of its existence.” The actual system classified simple visual patterns.

Here’s what matters for understanding modern AI: a single layer of perceptrons processing a batch of inputs is literally a matrix multiplication. Stack the inputs into rows of a matrix, stack the weights into columns, multiply the two matrices together, and you get all the outputs at once. This isn’t a metaphor. It’s the exact operation. When NVIDIA sells a $40,000 GPU to a data center running legal AI workloads, the chip spends 80–90% of its time on matrix multiplication. The operation McCulloch and Pitts described in 1943 is the operation your vendor is paying to run billions of times per second.

Then, in 1969, MIT professors Marvin Minsky and Seymour Papert published Perceptrons, a book that triggered the first AI winter. They proved mathematically that a single-layer perceptron cannot solve any problem where the classes aren’t separable by a straight line — including the trivially simple XOR function (output 1 when exactly one of two inputs is 1, otherwise output 0). They acknowledged that multi-layer networks could theoretically solve these problems, but noted that no one knew how to train them. Funding agencies read the book, concluded neural networks had no future, and redirected money to symbolic AI. Neural network research entered a nearly two-decade winter.

Learning to Learn#

The winter broke in 1986. David Rumelhart, Geoffrey Hinton, and Ronald Williams published “Learning representations by back-propagating errors” in Nature, demonstrating that multi-layer networks could learn through backpropagation: run an input forward through the network, compare the output to the correct answer, calculate the error, then propagate that error backward through each layer using the chain rule from calculus, adjusting every weight proportionally to how much it contributed to the mistake. The idea wasn’t entirely new — Paul Werbos had described it in his 1974 PhD thesis — but the 1986 paper landed with compelling experiments and impeccable timing.

Backpropagation solved the problem Minsky and Papert had identified: how to train networks deeper than one layer. It remains how every neural network learns today. The 2017 Transformer paper, RLHF, Fine-Tuning — all refinements of what the network learns and how it’s optimized. The underlying algorithm is still the chain rule, running backward through hundreds of layers instead of three.

The GPU Moment#

Backpropagation worked, but for two decades it couldn’t scale. Neural networks remained small, slow, and frequently outperformed by simpler methods like support vector machines. The bottleneck was compute: training a network means running millions of matrix multiplications, and CPUs process them sequentially.

On September 30, 2012, Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton entered AlexNet — a deep neural network — in the ImageNet Large Scale Visual Recognition Challenge and demolished the competition: 15.3% top-5 error rate versus 26.2% for the runner-up. The architectural innovations were incremental — deeper layers, ReLU activation functions, dropout regularization. The real breakthrough was training on GPUs. Two NVIDIA GTX 580 graphics cards — $500 consumer gaming hardware — turned out to be spectacularly good at the one thing neural networks need most: massively parallel matrix multiplication.

AlexNet proved that neural networks, given enough data and enough parallel compute, could outperform every hand-engineered approach. The AI spring that followed — and that we’re still in — dates to that September. Jensen Huang has said that AlexNet is how NVIDIA got into AI: once the company realized deep learning could run on its chips, it redirected its R&D accordingly. The GPU that was designed to render video game graphics became the engine of the entire AI industry.

The Sequence Problem#

Vision is spatial. Language is sequential. Word order matters — “The firm represented the plaintiff” means something entirely different reversed. Recurrent neural networks (RNNs) tried to handle this by passing a hidden state forward after each Token, but gradients shrank exponentially over long sequences — the network forgot the start of a document by the time it reached the end. LSTMs (Hochreiter & Schmidhuber, 1997) added gates that let the network selectively remember and forget, and dominated NLP for two decades — Google Translate, Siri, Alexa all ran on them. But LSTMs still process tokens one at a time. You can’t parallelize them.

In 2014, Dzmitry Bahdanau and colleagues published “Neural Machine Translation by Jointly Learning to Align and Translate”, introducing the attention mechanism. The problem it solved was concrete: in an LSTM-based translation system, the encoder reads an entire input sentence and compresses it into a single fixed-length vector — a bottleneck that forces the model to cram everything it knows about a 50-word sentence into a few hundred numbers. Bahdanau’s insight was to let the decoder look back at every position in the input at each step of generating the output, assigning a learned weight to each position based on relevance. Translating the subject of a sentence? Attend heavily to the first few words. Translating a verb phrase near the end? Shift attention there. The model learned where to look, rather than trying to remember everything at once. It was the architectural seed of everything that came next.

Attention Is All You Need#

On June 12, 2017, a team from Google Brain and Google Research posted “Attention Is All You Need” (Vaswani et al.) to arXiv. The paper proposed a radical simplification: strip out recurrence entirely. No RNNs. No LSTMs. No sequential processing at all. Instead, build the entire network from attention mechanisms and feedforward layers. They called it the Transformer.

The key innovation was self-attention: every Token in the input attends to every other Token simultaneously. The intuition is straightforward. When you read the sentence “The bank approved the loan because its terms were favorable,” you know “its” refers to “the loan,” not “the bank.” You make that connection by considering how every word in the sentence relates to every other word. Self-attention is a mechanism that lets the model do the same thing — for every Token in the input, compute a relevance score against every other Token, then use those scores to build a representation that reflects the full context.

The mechanics work through three matrices — Queries (Q), Keys (K), and Values (V) — each derived from the input by multiplying it by a different learned weight matrix. Think of it this way: Q represents what each Token is looking for, K represents what each Token contains, and V represents the information each Token carries. Multiply Q by the transpose of K and you get a matrix of attention scores — a number for every pair of tokens indicating how much one should attend to the other. Multiply those scores by V and you get context-weighted representations: each Token’s output is a weighted mix of information from all other tokens, with the weights determined by relevance. Two matrix multiplications. That’s the whole mechanism.

This mattered for two reasons. First, because every Token attends to every other Token in parallel, the Transformer processes an entire sequence at once instead of word by word. A context window of 200,000 tokens gets processed in a single forward pass, with every position aware of every other position.

Second, because the core of self-attention is matrix multiplication, it maps perfectly onto GPU hardware. The same chips that made AlexNet possible in 2012 — designed for the parallel matrix operations needed to render video game graphics — turn out to be ideal for transformers. The architectural match between transformers and GPU hardware is a major reason this particular architecture won: not just because it works well, but because it runs fast on hardware that already existed.

The Transformer paper trained a 100-million-parameter model on eight NVIDIA P100 GPUs; the larger version trained in 3.5 days. It set a new state of the art in machine translation. Within a year, two divergent lineages emerged — and the split matters for understanding what today’s AI tools can and can’t do.

Google’s BERT (2018) used the

Transformer’s encoder. An encoder sees the entire input at once — attention flows in both directions, so every

Token’s representation reflects the full context. BERT was trained by masking random words in a sentence and predicting what was hidden, which forced it to build deep representations of meaning. That makes encoders powerful for understanding: search ranking, document classification,

Semantic Search, and the

Embeddings that power every

RAG pipeline. When a legal AI tool retrieves the five most relevant clauses from a 500-page contract, an encoder model (or its descendants) is almost certainly doing the matching.

OpenAI’s GPT-1 (2018) used the decoder — and this is the branch that became generative AI. A decoder is autoregressive: it sees only what came before, never what comes after, and is trained to predict the next

Token. That constraint is the entire mechanism behind text generation. The model produces one

Token, appends it to the input, then predicts the next, then the next — each choice conditioned on everything generated so far. It’s why these models can draft a memo, write a brief, or summarize a deposition: they generate language one

Token at a time, left to right, by repeatedly answering the question “what word comes next?” Every

LLM powering today’s legal AI tools — GPT-4, Claude, Gemini — descends from this decoder-only branch.

Scale Is All You Need#

What followed was one of the most expensive empirical experiments in computing history. OpenAI tested a hypothesis: if you keep making the same architecture bigger, it keeps getting better.

GPT-1 (2018) had 117 million parameters. GPT-2 (2019) scaled to 1.5 billion — a 13× increase that produced text coherent enough that OpenAI initially withheld the full model over concerns about misuse. GPT-3 (2020) jumped to 175 billion parameters, and something qualitatively new emerged: the model could perform tasks it was never explicitly trained on, learning from just a few examples provided in the prompt. Each order-of-magnitude increase in parameters unlocked capabilities that the previous scale couldn’t touch.

In 2020, Jared Kaplan and colleagues at OpenAI published “Scaling Laws for Neural Language Models”, showing that model performance improves as a smooth, predictable function of three variables: parameters, training data, and compute. The relationship held across seven orders of magnitude with no sign of saturation. This transformed LLM training from art into engineering: given a compute budget, you could calculate the optimal model size and training duration.

DeepMind’s Chinchilla paper (2022) refined the formula, showing that most models were undertrained — you need far more data per parameter than labs had been using. The industry responded by training smaller models on vastly more data, squeezing more capability out of less silicon.

Modern

frontier models add two more innovations. Mixture of Experts (MoE) architectures activate only a fraction of the network per

Token — DeepSeek-V3 has 671 billion total parameters but uses only 37 billion per

Token, routing inputs to specialized subnetworks. Post-training —

RLHF, instruction tuning, chain-of-thought reasoning — shapes behavior after pre-training. Many of the biggest performance gains in 2025–2026 come from this stage.

But underneath it all, every layer of every model is still a matrix multiplication followed by a nonlinearity.

The Ceiling#

Scaling laws are power laws, and power laws have a built-in problem: each unit of improvement costs more than the last. The first doubling of compute buys a large gain. The tenth doubling buys almost nothing.

The most fundamental ceiling may be the training objective itself. Every LLM is trained to predict the next Token — a loss function that optimizes for plausibility, not accuracy. A model that minimizes prediction loss learns which words tend to follow which. It doesn’t learn to verify whether its output is true — it learns to predict what a human would write next, including the patterns in how humans state things confidently regardless of accuracy.

Sutskever has argued that if the model is smart enough, predicting what a wise and capable person would say next might require genuinely understanding the world. But he’s also acknowledged the limit from a different direction — at NeurIPS 2024, he stated that “pre-training as we know it will unquestionably end.” Data is “the fossil fuel of AI,” and we’ve reached peak supply. Epoch AI projects that publicly available, high-quality human-generated text could be effectively exhausted by 2028, possibly sooner given how aggressively labs overtrain. You can repeat data, but each additional pass yields diminishing returns — most of the value is extracted in the first few epochs.

Karpathy’s 2025 year-in-review captures the resulting tension: LLMs are “simultaneously a lot smarter than I expected and a lot dumber than I expected.” The industry hasn’t realized even 10% of their potential at current capability — but the path to the next capability level isn’t just “more compute.” The responses so far: synthetic data (models generating training data for other models, with unresolved quality concerns), test-time compute (letting the model “think longer” during inference rather than training a bigger model), and reinforcement learning from verifiable rewards (training against problems with objectively correct answers, like math and code, which pushes models toward reasoning rather than pattern-matching). Whether any of these break through the ceiling or just push it higher is an open question. The scaling era isn’t over, but the easy gains are.

What Hasn’t Changed, and What Might#

The Transformer architecture is eight years old and essentially unchanged. Researchers are exploring state-space models, linear attention variants, and hybrid architectures that trade the Transformer’s quadratic attention cost for something cheaper at very long sequences. None has displaced it yet.

The history suggests a pattern: the architectures that win aren’t always the most theoretically elegant — they’re the ones that map best onto the hardware available at the time. The Transformer won because GPUs were already optimized for matrix multiplication. Chipmakers responded by building silicon purpose-designed for the workload. The next architecture will win when it finds its own hardware match — or when someone builds the chip it needs.

Further Reading#

- A Logical Calculus of the Ideas Immanent in Nervous Activity (McCulloch & Pitts, 1943). The paper that started it all.

- Perceptrons (Minsky & Papert, 1969). The book that nearly ended it.

- Learning representations by back-propagating errors (Rumelhart, Hinton & Williams, 1986). The Nature paper that resurrected neural networks.

- Backpropagation Applied to Handwritten Zip Code Recognition (LeCun et al., 1989). The earliest real-world application of backprop.

- Deep Neural Nets: 33 years ago and 33 years from now (Karpathy, 2022). Reproducing LeCun 1989 and reflecting on what changed (not much) and what scaled (everything).

- Attention Is All You Need (Vaswani et al., 2017). The Transformer paper.

- Scaling Laws for Neural Language Models (Kaplan et al., 2020). The empirical discovery that performance scales predictably with compute.

- Training Compute-Optimal Large Language Models (Hoffmann et al., 2022). The Chinchilla paper — most models were undertrained.

- The Illustrated Transformer (Jay Alammar). The best visual walkthrough of Transformer architecture.

This post is part of the History of AI series on LegalRealist AI. It is intended for informational and educational purposes only and does not constitute legal advice. AI capabilities and architectural details described here reflect publicly available research as of the publication date and are subject to rapid change.